Plasmalogen analysis often appears straightforward until a team has to defend the final data package. At that point, the real technical questions emerge. Are you measuring a lipid class, a molecular species, or an evidence-tiered subtype-sensitive assignment? Are "P-" calls supported by the workflow, or are they only software-level annotations? Does the study need relative comparison, response-normalized reporting, or a fully calibrated concentration workflow? And can the final dataset withstand review by a scientist or bioinformatics lead who cares about annotation confidence, QC behavior, raw exports, and method transparency? Current reviews and protocols agree that plasmalogen and broader ether-lipid analysis is analytically valuable but nontrivial, especially when distinguishing plasmenyl from plasmanyl species and when converting LC-MS/MS signals into defensible quantitative outputs.

Analytical Goal First: What Resolution Do You Need (Class vs Species vs Isomer-Sensitive)?

Before discussing HILIC, reversed-phase LC, fragmentation mode, HRMS, or targeted acquisition, define the resolution claim the project needs to make. Reviews of plasmalogen analytics consistently show that the workflow burden rises when a project moves from class-level comparison to molecular-species interpretation and rises again when subtype-sensitive assignment becomes part of the output.

For most RUO projects, the resolution ladder looks like this:

1. Class-level or subclass-focused question

Example: Do ethanolamine plasmalogens shift across conditions?

A class-oriented workflow may be enough when the output is comparative and the study does not need subtype-sensitive interpretation.

2. Species-level question

Example: Which PE(P-) or PC(P-) species change, and how reproducibly?

This usually requires more chromatographic control, cleaner integration, and more transparent annotation logic than a class-only design.

3. Isomer-sensitive or subtype-sensitive question

Example: Can this workflow support evidence-tiered plasmenyl vs plasmanyl interpretation for selected species?

This is where separation strategy, fragment logic, standards coverage, and reporting discipline become much more important. Review-level sources support this as a workflow-dependent challenge rather than a universally solved one.

A common early mistake is to over-design the method before defining the real research claim. That produces one of two failures. The first is over-engineering: the team requests maximum detail for a study that only needs directional comparison, which reduces throughput and inflates cost and review burden. The second is under-resolution: the team chooses a fast comparative workflow and later interprets the output as though it supported subtype-sensitive calls. Both errors come from confusing method complexity with claim validity.

Readers who want a concise terminology foundation before method selection can start with plasmalogen basics and measurement levels.

Figure 1. Mapping research questions to analytical resolution in plasmalogen LC-MS/MS.


This figure shows how research intent determines whether the workflow should be optimized for class-level comparison, species-resolved profiling, or evidence-tiered subtype-sensitive interpretation, and how method burden and QC burden increase with each step.

Decision table: analytical goal vs strategy vs main risk

| Analytical goal | Recommended analytical posture | What the method must support | Main risk if underdesigned |
|---|---|---|---|
| Class/subclass trend | Faster comparative LC-MS workflow | Stable class grouping and reproducible comparative signal | Species-level interpretation inferred from class data |
| Species-resolved profiling | LC-MS/MS with stronger chromatographic and review control | RT consistency, fragment-aware review, reproducible peak integration | Overcalling exact identity from insufficient evidence |
| Subtype-sensitive assignment | Evidence-tiered workflow | Combined chromatographic, MS/MS, and reference support | False plasmenyl/plasmanyl calls from annotation defaults |

In practice, that often means aligning the project with a broad lipid profiling workflow when discovery coverage matters, a predefined analyte panel with tighter quantitative control when the target list is already known, or a matrix-tailored RUO method setup when the study needs custom evidence thresholds or modified reporting fields.

Plasmenyl vs Plasmanyl: Why It's Hard and What Evidence You Need

The structural distinction is clear in chemistry: plasmenyl lipids contain a vinyl ether linkage at sn-1, whereas plasmanyl lipids contain an alkyl ether linkage at sn-1. The analytical challenge is that a clear structural difference does not automatically become a clear assignment in every LC-MS/MS workflow. Review sources describe this as a recurring issue in ether-lipid analysis because chromatographic overlap, workflow-dependent fragmentation behavior, and incomplete reference coverage can all weaken assignment confidence.

For most RUO projects, subtype-sensitive interpretation should be treated as evidence-tiered assignment rather than full structural confirmation unless orthogonal support is explicitly included. That framing is more accurate, more reviewable, and better aligned with current reporting discipline.

What evidence types are useful?

The most defensible workflows usually combine several evidence categories rather than relying on one feature.

Chromatographic behavior.

In suitable workflows, retention behavior can support interpretation, especially when reversed-phase conditions separate related species in a consistent way. That does not make retention behavior standalone proof, but it can add a useful evidence layer when paired with MS/MS and reference logic. Review-level sources support this point, but retention-based evidence is best treated as a strong workflow option rather than a universal rule.

MS/MS behavior.

Fragment information can strengthen subclass interpretation, but only within the context of acquisition mode, adduct chemistry, lipid class, and review rules. Fragment evidence is useful when the method was designed to generate interpretable fragments for that purpose, not simply because tandem MS was collected.

Standards or reference support.

A practical limitation in ether-lipid analytics is incomplete commercially available standard coverage across diverse ether species. That makes transparent reporting more important, because many projects work with partial reference support rather than full species-by-species confirmation.

Explicit annotation confidence.

The final report should distinguish between confirmed, evidence-supported, and lower-confidence assignments. The lipidomics reporting checklist reinforces the need to represent identification level honestly instead of allowing implication to substitute for evidence.

A common failure mode in outsourced or internal screening studies is that the final table labels species as P- or O- without disclosing how that call was made. For a data-review lead, that is a major red flag. The question is not whether a software package recognized a candidate. The question is whether the workflow generated enough evidence to support the final label.
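The tiering idea can be made explicit as a small decision rule recorded alongside each call. The following is a minimal sketch, not a prescribed standard: the three tier names come from the text above, while the `SubtypeEvidence` fields, the rule in `assign_tier`, and the example species are illustrative assumptions that a project would replace with its own agreed logic.

```python
from dataclasses import dataclass


@dataclass
class SubtypeEvidence:
    """Evidence recorded for one candidate P-/O- call (fields are illustrative)."""
    rt_consistent: bool      # retention behavior fits the expected pattern
    msms_diagnostic: bool    # fragments are interpretable for the ether linkage
    reference_matched: bool  # a standard or reference material was matched


def assign_tier(ev: SubtypeEvidence) -> str:
    """Map recorded evidence to a reporting tier using a hypothetical rule set."""
    if ev.reference_matched and ev.rt_consistent and ev.msms_diagnostic:
        return "confirmed"
    if ev.rt_consistent and ev.msms_diagnostic:
        return "evidence-supported"
    # Anything less, including a software-only annotation, stays low confidence.
    return "lower-confidence"


# Example species names are placeholders, not results from any dataset.
calls = {
    "PE(P-18:0/20:4)": SubtypeEvidence(True, True, True),
    "PE(P-16:0/22:6)": SubtypeEvidence(True, True, False),
    "PC(O-16:0/18:1)": SubtypeEvidence(False, False, False),
}
tiers = {name: assign_tier(ev) for name, ev in calls.items()}
```

Storing the evidence flags next to the tier, rather than the tier alone, lets a reviewer see why a label was assigned instead of having to trust it.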

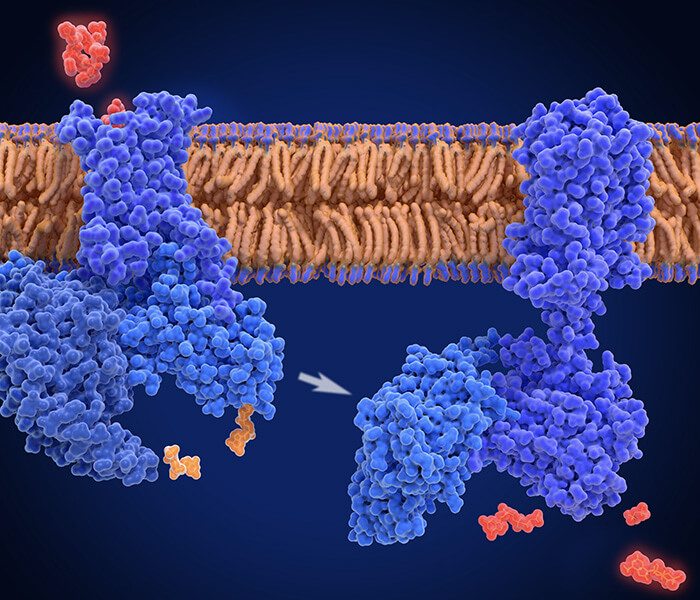

Figure 2. Evidence chain for plasmenyl vs plasmanyl interpretation.


This figure contrasts the two ether linkages and visualizes why subtype-sensitive interpretation should rely on combined evidence from chromatographic behavior, MS/MS support, and reference-aware annotation rather than a single software-driven call.

Eight evidence fields that should appear in the report

For any project that makes subtype-sensitive claims, the report should disclose at least:

- Lipid nomenclature used in the final table

- Whether subtype wording reflects confirmed or evidence-supported assignment

- LC mode and column chemistry

- Acquisition type and polarity

- Fragment or transition logic used in review

- RT behavior used in interpretation, if applicable

- Standards or internal-standard coverage relevant to the analyte class

- Annotation confidence tier or decision rule

These fields are not administrative extras. They are the minimum needed to distinguish a defensible call from a convenient label. This is also where normalized peak-table preparation and statistical review of feature stability and group separation become useful, particularly when the downstream team needs auditable processed outputs rather than a summary-only report.

LC Strategy: Separation Choices and When HILIC Helps

The LC question should always be framed as what the chromatography needs to accomplish. HILIC and normal-phase-like approaches are often useful when class organization or headgroup-driven separation is the priority. Reversed-phase LC is often more helpful when molecular-species behavior and retention-based evidence matter more. That distinction appears repeatedly in protocols and review discussions of lipidomics and ether-lipid analysis.

When HILIC helps

HILIC can be attractive when the project needs:

- Efficient class-aware separation

- Comparatively high-throughput profiling

- A practical route for relative plasmalogen measurement in defined matrices

- Clean grouping of related headgroup classes before deeper review

The 2023 plasmalogen protocol based on high-resolution MS presents HILIC as a rapid route for identifying and quantifying relative levels of ethanolamine and choline plasmalogens in several matrix types. That is useful support for HILIC in appropriately framed RUO projects, but not evidence that HILIC is automatically best for every subtype-sensitive task.

Where HILIC can become limiting

When a project needs stronger species-level or subtype-sensitive support, class-wise elution alone may not be enough. Review-level sources note that some HILIC-style strategies provide less opportunity to separate plasmanyl and plasmenyl species compared with workflows built around retention behavior at the molecular-species level. That should be treated as a method-selection consideration, not as a universal prohibition.

When reversed-phase earns its keep

Reversed-phase LC becomes more attractive when the workflow needs:

- Better control of species-level separation

- Retention behavior as part of the evidence stack

- Stronger handling of chain-length and unsaturation-related separation

- More confidence for targeted review of selected candidate species

The strongest way to state the evidence is this: review sources support RP retention behavior as a useful aid in plasmanyl/plasmenyl interpretation in suitable workflows, but not as a standalone or universally sufficient proof layer.

Matrix matters more than teams often admit

Chromatographic conditions are not portable templates. Matrix composition affects ion suppression, recovery, background complexity, and coelution risk. Protocol-level guidance for accurate annotation and semi-absolute quantification explicitly ties extraction choice, acquisition strategy, and internal-standard design to the native lipidome under study.

Matrix-to-strategy table

| Matrix profile | Separation priority | Often suitable starting point | Main risk |
|---|---|---|---|
| Lower complexity with predefined targets | Throughput and reproducibility | Targeted LC-MS/MS with class-aware design | Assuming subtype certainty from targeted signals alone |
| Moderate complexity with species profiling goals | Species separation and annotation control | RP LC-MS/MS with evidence-tiered review | Overcalling identity from limited fragments |
| Higher complexity exploratory profiling | Broad coverage and transparent confidence tiers | Untargeted lipidomics with explicit reporting rules | Treating exploratory output as confirmation-grade evidence |

Where broader phospholipid context matters, a glycerophospholipid-focused analytical workflow can be a sensible fit. When discovery breadth is the main goal, an exploratory lipidomics workflow with confidence-tier reporting is usually more honest than a falsely precise targeted panel. And once the feature table is stable enough for biological interpretation, pathway-level organization of lipid changes can help with downstream synthesis.

Quantification Strategy: Relative vs Absolute (and What Internal Standards Solve)

The right question is not "Is absolute quantification better?" The right question is "What kind of number does the project need to support?" Relative quantification is often appropriate for prioritization, trend analysis, and comparative screening-stage work. Absolute quantification becomes more valuable when the project needs concentration values, calibrator-defined response modeling, and stronger cross-run comparability. Protocols for accurate annotation and semi-absolute quantification, together with newer reporting guidance, support treating these as different output claims rather than a simple quality hierarchy.

When relative quantification is enough

Relative quantification is usually sufficient when the study aims to:

- Compare groups or conditions

- Prioritize responsive species

- Support screening-stage go/no-go choices

- Build a candidate list for later targeted follow-up

Done well, relative quantification is still rigorous. It requires reproducible extraction, stable acquisition, appropriate normalization, pooled QC review, and honest annotation boundaries. The 2023 HILIC-based plasmalogen protocol is a good example of a method framed around relative-level quantification rather than universal concentration claims.

When absolute quantification is worth the extra burden

Absolute quantification is more justified when the project requires:

- Reported concentration values

- Calibration curves and defined linear range

- Stronger cross-batch comparability

- Tighter acceptance criteria for outsourcing deliverables

That rigor comes with real overhead: calibrator planning, response-model review, matrix effects, and more explicit QC interpretation. It should therefore be requested because the project needs that claim, not because it sounds stronger.
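To make the calibration burden concrete, here is a minimal sketch of a calibrator-defined response model with a range check. It assumes an unweighted straight-line fit and treats the span of the calibrators as the usable range. Both are simplifying assumptions, the calibrator values are invented for illustration, and a real method would define the model, weighting, and acceptance criteria in its own validation plan.

```python
def fit_line(x, y):
    """Unweighted least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired calibrator points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def back_calculate(response, slope, intercept, low, high):
    """Return (concentration, in_range) so out-of-range values are flagged explicitly."""
    conc = (response - intercept) / slope
    return conc, low <= conc <= high


# Hypothetical calibrators: concentration (arbitrary units) vs measured response.
cal_conc = [1.0, 2.0, 5.0, 10.0]
cal_resp = [0.21, 0.40, 1.01, 1.99]
slope, intercept = fit_line(cal_conc, cal_resp)

conc, in_range = back_calculate(0.80, slope, intercept, min(cal_conc), max(cal_conc))
high_conc, high_in_range = back_calculate(3.00, slope, intercept, min(cal_conc), max(cal_conc))
```

Returning the range flag together with the value keeps extrapolated results visible in the final table instead of letting them pass as calibrated concentrations.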

What internal standards actually solve

Internal standards help control extraction variability, injection variability, ion suppression, and drift across the run. But they do not automatically transform a comparative workflow into a fully absolute workflow. Protocol guidance explicitly ties quantitative design to lipidome-specific internal-standard mixtures and acquisition logic, which is a more nuanced claim than "add standards and the method becomes absolute."
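What internal-standard correction does, and does not, produce can be shown with a short sketch. The function below divides each analyte peak area by the area of its assigned internal standard. The analyte-to-standard mapping, the names, and the peak areas are hypothetical. The output is a unitless response ratio, which is not a concentration.

```python
def response_ratios(analyte_areas, istd_areas, analyte_to_istd):
    """Divide each analyte peak area by the area of its assigned internal standard."""
    ratios = {}
    for analyte, area in analyte_areas.items():
        istd = analyte_to_istd[analyte]
        istd_area = istd_areas[istd]
        if istd_area <= 0:
            raise ValueError(f"internal standard {istd} has no usable signal")
        ratios[analyte] = area / istd_area
    return ratios


# Hypothetical mapping: one class-matched standard for a PE plasmalogen species.
mapping = {"PE(P-18:0/20:4)": "PE_ISTD"}

# Two injections of the same sample; the second lost 20% of signal overall.
inj1 = response_ratios({"PE(P-18:0/20:4)": 50000.0}, {"PE_ISTD": 100000.0}, mapping)
inj2 = response_ratios({"PE(P-18:0/20:4)": 40000.0}, {"PE_ISTD": 80000.0}, mapping)
```

In this example both injections give the same ratio even though the raw areas differ, which is the correction the standard provides. Turning that ratio into an amount still requires calibrators and a response model.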

| Claim in final table | Minimum support needed | What not to claim |
|---|---|---|
| Relative change | QC-stable normalized response | Concentration |
| Response-normalized comparison | Class-relevant internal standards and batch rules | Absolute amount |
| Absolute concentration | Calibrators, response model, QC acceptance | Subtype certainty without evidence |

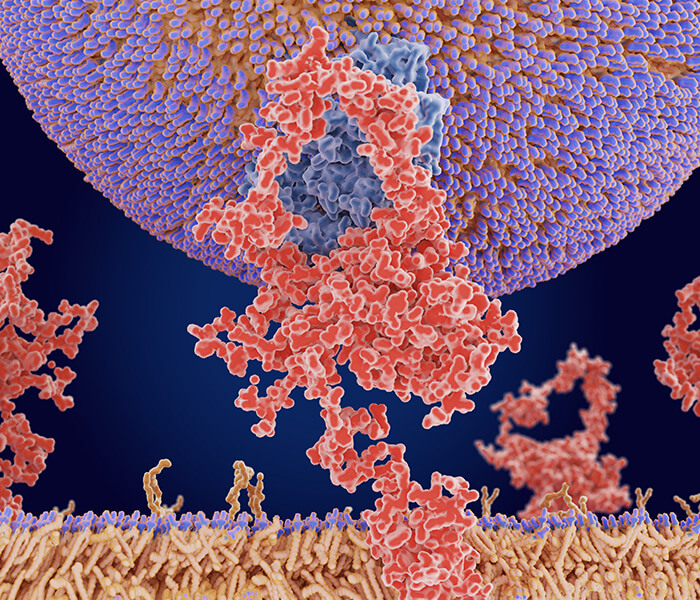

Figure 3. Relative vs absolute quantification in plasmalogen LC-MS/MS.


This figure compares the reporting logic, standards burden, and QC layout associated with relative and absolute strategies, highlighting internal standards as a correction tool rather than a substitute for calibration.

Strategy table: what each quantification mode needs

| Strategy | Usually sufficient for | Additional support needed | Common pitfall |
|---|---|---|---|
| Relative quantification | Trend analysis, prioritization, screening support | Stable normalization, pooled QC, transparent feature review | Presenting fold change as amount |
| Response-normalized comparison | Comparative profiling with stronger control | Class-aware standards and batch rules | Treating response normalization as full calibration |
| Absolute quantification | Concentration claims and tighter comparability | Calibrators, response model, linear range, QC acceptance | Reporting concentration without complete calibration evidence |

QC layout is part of the method, not an appendix

Pooled QC injections, blanks, study-sample spacing, and calibrator placement are all part of whether a quantitative result can be trusted. The current lipidomics reporting checklist treats QC and transparency as core reporting elements, not optional supplements.
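As an illustration of QC layout as a design decision, the sketch below builds an injection list with a leading blank, conditioning pooled-QC injections, and a pooled QC after every few study samples. The spacing, the number of conditioning injections, and the labels are assumptions chosen for the example. Sample randomization and calibrator placement are deliberately left out.

```python
def build_run_order(samples, qc_every=5, lead_blanks=1, conditioning_qcs=2):
    """Return an injection list with blanks, conditioning QCs, and bracketing pooled QCs."""
    if qc_every < 1:
        raise ValueError("qc_every must be at least 1")
    order = ["blank"] * lead_blanks + ["pooled_qc"] * conditioning_qcs
    for i, sample in enumerate(samples, start=1):
        order.append(sample)
        # Insert a pooled QC at the chosen spacing and always after the last sample.
        if i % qc_every == 0 or i == len(samples):
            order.append("pooled_qc")
    order.append("blank")  # closing blank to check carryover at the end of the run
    return order


samples = [f"S{i:02d}" for i in range(1, 8)]
run_order = build_run_order(samples, qc_every=3)
```

Generating the sequence from declared parameters means the QC spacing can be stated in the method note and checked against the acquired run.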

For practical review, ask for these QC fields:

- Blank type and frequency

- Pooled QC composition and injection spacing

- Replicate strategy

- Internal-standard list and class coverage

- Drift monitoring method

- Peak acceptance rules

- Missing-value handling rule

- Confidence flagging for low-quality features

Once the feature table is stable, multivariate pattern review (for group separation and drift inspection) and downstream bioinformatic analysis (for metabolomics-style data structuring) can help convert a raw peak table into a more auditable decision package.

Readers comparing route options beyond quantification alone may also want targeted vs untargeted plasmalogen analysis.

Practical QC thresholds to request

Exact thresholds vary by platform, matrix, and project scope, so they should be agreed in the project brief rather than borrowed blindly from unrelated workflows. What matters most is not one universal cutoff, but whether the thresholds were declared before the final table was built and whether failures were handled consistently. Reporting-guideline sources support this threshold-and-disclosure mindset even when they do not prescribe one fixed numeric rule for every lipidomics design.

In practice, the best approach is to predeclare thresholds in the project brief, then flag or exclude features that fail them. That means the brief should specify how blank carryover, pooled-QC reproducibility, missingness, drift, and integration quality will be handled before interpretation starts. Once those rules are fixed, the final table can clearly separate accepted features, flagged features, and excluded features. This makes review faster and avoids the common problem of retrofitting QC logic after the biological story is already forming.
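A predeclared rule set of this kind can be sketched in a few lines. The threshold values below are placeholders rather than recommendations, and the policy that blank carryover excludes a feature while other failures only flag it is an assumption made for illustration. The real values and policy belong in the project brief.

```python
from statistics import mean, stdev

# Hypothetical thresholds; declare the real ones before the final table is built.
THRESHOLDS = {"max_qc_cv_pct": 30.0, "max_blank_ratio": 0.10, "max_missing_frac": 0.20}


def qc_cv_pct(qc_values):
    """Percent coefficient of variation across pooled-QC injections."""
    return 100.0 * stdev(qc_values) / mean(qc_values)


def classify_feature(qc_values, blank_mean, sample_values, thresholds=THRESHOLDS):
    """Return (status, reasons); status is 'accepted', 'flagged', or 'excluded'."""
    if blank_mean / mean(qc_values) > thresholds["max_blank_ratio"]:
        return "excluded", ["blank carryover"]
    reasons = []
    if qc_cv_pct(qc_values) > thresholds["max_qc_cv_pct"]:
        reasons.append("pooled-QC CV")
    detected = [v for v in sample_values if v is not None]
    missing_frac = 1 - len(detected) / len(sample_values)
    if missing_frac > thresholds["max_missing_frac"]:
        reasons.append("missingness")
    return ("flagged" if reasons else "accepted"), reasons


# Invented response values for three features.
stable = classify_feature([100, 105, 95, 102], 2.0, [90, 110, 120, 80, 100])
noisy = classify_feature([100, 160, 60, 120], 2.0, [90, None, None, 80, 100])
carryover = classify_feature([100, 100, 102, 98], 50.0, [90, 110, 120, 80, 100])
```

Because the function returns the reasons as well as the status, the final table can show why a feature was flagged or excluded, which supports consistent handling across the dataset.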

Deliverables and acceptance framework

| Deliverable | What should be included | Minimum acceptance logic |
|---|---|---|
| Processed feature table | Feature ID, lipid name, adduct, m/z, RT, response value, confidence tier, QC flag | No unexplained missing core columns; confidence tiers visible |
| Evidence table for subtype-sensitive calls | RT note, fragment support note, standards/reference note, review comment | Claims matched to evidence tier; no unsupported subtype certainty |
| QC summary | Blank behavior, pooled QC behavior, drift summary, replicate summary, exclusion counts | Pass/fail or flag logic declared and applied consistently |
| Raw/export package | Vendor raw files and agreed export format for downstream processing | File completeness confirmed; sample IDs consistent across package |
| Method note | Extraction summary, LC mode, acquisition type, standards strategy, normalization rules | Enough detail to interpret the final table without guesswork |

A practical example helps. If a project asks for species-level comparative profiling with evidence-tiered subtype notes, the deliverables should include a processed table, a QC summary, and a method note that explains how subtype wording was assigned. If the project later requests concentration output for selected species, that should trigger a scope revision with calibrator design, revised QC review, and updated acceptance criteria rather than a cosmetic report edit.
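Two of the acceptance checks in the table, core columns present and sample IDs consistent across the package, are simple enough to automate on receipt. The sketch below is one possible form. The snake_case column names are an assumed encoding of the fields listed for the processed feature table, and the sample IDs are invented.

```python
REQUIRED_COLUMNS = [
    "feature_id", "lipid_name", "adduct", "mz", "rt",
    "response", "confidence_tier", "qc_flag",
]


def check_package(table_columns, table_sample_ids, raw_file_sample_ids):
    """Return a list of problems; an empty list means both checks passed."""
    problems = []
    missing = [c for c in REQUIRED_COLUMNS if c not in table_columns]
    if missing:
        problems.append("missing columns: " + ", ".join(missing))
    only_in_table = sorted(set(table_sample_ids) - set(raw_file_sample_ids))
    only_in_raw = sorted(set(raw_file_sample_ids) - set(table_sample_ids))
    if only_in_table:
        problems.append("samples without raw files: " + ", ".join(only_in_table))
    if only_in_raw:
        problems.append("raw files without table entries: " + ", ".join(only_in_raw))
    return problems


# A package missing its confidence tiers and one raw file.
issues = check_package(
    table_columns=["feature_id", "lipid_name", "adduct", "mz", "rt", "response", "qc_flag"],
    table_sample_ids=["S01", "S02", "S03"],
    raw_file_sample_ids=["S01", "S02"],
)
```

A check like this does not judge data quality. It only confirms that the package is complete enough for the review to start.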

When to Use This Workflow—and When Not to

Use a plasmalogen-focused LC-MS/MS workflow when:

- Ether-lipid biology is central to the RUO question

- Species-level interpretation matters more than total phospholipid abundance

- The team can state its annotation and quantification boundary clearly

- The output will be reviewed as a technical dataset, not just a narrative figure

Do not use a high-burden subtype-sensitive design when:

- The project only needs directional class-level trends

- Throughput or sample volume makes the extra evidence impractical

- The budget does not support the standards and QC burden

- The downstream decision does not depend on subtype-sensitive interpretation

Method discipline is often more valuable than adding complexity.

FAQ

1) Can LC-MS/MS always distinguish plasmenyl from plasmanyl lipids?

No. It is the main platform for this work, but subtype-sensitive interpretation remains workflow-dependent and often requires combined evidence rather than a single signal.

2) Is HILIC better than reversed-phase for plasmalogens?

Not universally. HILIC can be useful for class-aware and relative-level workflows, while reversed-phase is often more useful when species behavior or RT-based evidence is part of the interpretation goal.

3) Does high-resolution MS solve the subtype problem by itself?

No. HRMS strengthens measurement quality, but exact mass alone does not complete subtype-sensitive assignment.

4) When is relative quantification sufficient?

When the project needs comparative profiling, prioritization, or directional screening support rather than concentration reporting.

5) If internal standards are used, is the result automatically absolute?

No. Internal standards improve correction, but absolute concentration claims also require calibrators, response modeling, and explicit QC acceptance.

6) What should a data-review lead ask for in the final package?

Raw or agreed export files, processed feature tables, nomenclature rules, RT/adduct fields, fragment or transition support, standards mapping, QC summary, confidence tiers, and missing-value handling.

7) Is untargeted analysis enough for a plasmalogen project?

Sometimes, especially for discovery-stage profiling. But untargeted output should not be presented as confirmation-grade evidence unless the workflow explicitly supports that interpretation level.

8) What is the biggest project-design mistake?

Failing to define the claim boundary at the start. Most downstream confusion comes from expecting subtype-sensitive or absolute claims from a workflow designed only for comparative profiling.

References

- Koch J, Watschinger K, Werner ER, Keller MA. 2022. Tricky Isomers—The Evolution of Analytical Strategies to Characterize Plasmalogens and Plasmanyl Ether Lipids. Frontiers in Cell and Developmental Biology 10:864716. DOI: 10.3389/fcell.2022.864716. https://doi.org/10.3389/fcell.2022.864716

- Vasku G, Acar N, Berdeaux O. 2023. Rapid Analysis of Plasmalogen Individual Species by High-Resolution Mass Spectrometry. In: Lipidomics: Methods and Protocols. Methods in Molecular Biology. DOI: 10.1007/978-1-0716-2966-6_22. https://doi.org/10.1007/978-1-0716-2966-6_22

- Wölk M, Fedorova M. 2025. Recommendations for Accurate Lipid Annotation and Semi-absolute Quantification from LC-MS/MS Datasets. Methods in Molecular Biology 2855:269-287. DOI: 10.1007/978-1-0716-4116-3_16. https://doi.org/10.1007/978-1-0716-4116-3_16

- Buré C, Ayciriex S, Testet E, Schmitter JM. 2013. A single run LC-MS/MS method for phospholipidomics. Analytical and Bioanalytical Chemistry 405(1):203-213. DOI: 10.1007/s00216-012-6466-9. https://doi.org/10.1007/s00216-012-6466-9

- Cajka T, Fiehn O. 2016. Toward Merging Untargeted and Targeted Methods in Mass Spectrometry-Based Metabolomics and Lipidomics. Analytical Chemistry 88(1):524-545. DOI: 10.1021/acs.analchem.5b04491. https://doi.org/10.1021/acs.analchem.5b04491

- Liebisch G, Ahrends R, Arita M, et al. 2024. The lipidomics reporting checklist: a framework for transparency of lipidomic experiments and repurposing resource data. Journal of Lipid Research 65(7):100621. DOI: 10.1016/j.jlr.2024.100621. https://doi.org/10.1016/j.jlr.2024.100621

- Dean JM, Lodhi IJ. 2018. Structural and functional roles of ether lipids. Protein & Cell 9(2):196-206. DOI: 10.1007/s13238-017-0423-5. https://doi.org/10.1007/s13238-017-0423-5