Meta Intent: A comprehensive technical resource for bioanalytical scientists developing robust LC-MS/MS methods for therapeutic protein quantitation in complex biological matrices. Covers signature peptide thermodynamics, SIL internal standard physics, and matrix effect mitigation strategies.

The Physics of the "Bottom-Up" Bioanalytical Paradigm

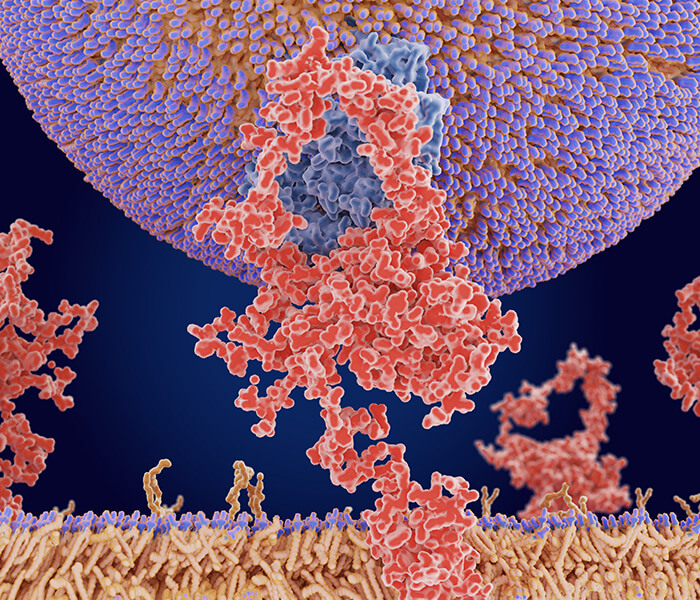

Protein biologics present a unique analytical challenge that small molecules simply do not share. A monoclonal antibody (mAb) can exceed 150 kDa and contain over 1,300 amino acid residues folded into a precise three-dimensional structure stabilized by disulfide bridges, hydrogen bonds, and hydrophobic packing. Placing this intact macromolecule directly into a triple quadrupole mass spectrometer is not a viable path to sensitive quantitation. The instrument's mass range, the molecule's poor electrospray ionization efficiency, and the sheer complexity of isotopic distributions all conspire against direct detection.

The solution—universally adopted in bioanalytical laboratories—is the "bottom-up" approach. The intact biologic is enzymatically cleaved into a collection of smaller peptides. One or two of these peptides, carefully selected and rigorously validated, serve as surrogates that report the concentration of the parent protein. The logic is sound: if digestion proceeds to completion and the surrogate peptide is stoichiometrically released, measuring the peptide tells you exactly how much protein was originally present.

But the word "if" carries enormous weight here. The transition from intact globular protein to quantifiable peptide fragment is not a trivial chemical reaction. It is a cascade of physical events governed by protein unfolding energetics, enzyme accessibility kinetics, and peptide stability thermodynamics. Understanding this cascade is the foundation upon which robust quantitative methods are built.

Proteolysis Kinetics: Beyond the Standard 1:20 Ratio

Most standard operating procedures call for a trypsin-to-substrate ratio of 1:20 (w/w) and an incubation period of 4 to 16 hours at 37°C. For many soluble, well-behaved proteins, this recipe works adequately. However, research biologics—particularly monoclonal antibodies, Fc-fusion proteins, and cysteine-knot scaffolds—are anything but "well-behaved." Their tertiary structures are rigid. Their disulfide-rich cores are resistant to denaturation. Trypsin, a serine protease that cleaves specifically at the carboxyl side of lysine and arginine residues, cannot access buried cleavage sites until the protein partially unfolds.

The efficiency of proteolysis is therefore a function of the enzyme-to-substrate ratio, the incubation time, and, critically, the extent of protein denaturation. Raising the enzyme load above 1:20 (for example, to 1:10) can accelerate digestion but increases exposure to chymotryptic activity, a known contaminant in trypsin preparations that cleaves at hydrophobic residues and generates unwanted peptide species. Conversely, extending digestion time beyond 16 hours increases the likelihood of nonspecific cleavage and peptide degradation. The methionine residue in your signature peptide may slowly oxidize. The asparagine in an NG motif may deamidate. Both modifications shift the peptide's mass and retention time, compromising quantitative accuracy. For robust results, laboratories often rely on standardized digestion protocols (in-gel or in-solution) that minimize variability across samples.

For rigid biologics such as mAbs, the use of Lys-C as a complementary or alternative protease offers distinct advantages. Lys-C retains activity under strongly denaturing conditions (up to 8 M urea), whereas trypsin is rapidly inactivated. A two-step digestion protocol—Lys-C in 8 M urea followed by dilution and trypsin addition—often achieves more complete digestion of disulfide-protected regions than trypsin alone. The choice of protease is therefore not a matter of habit but a calculated decision based on the structural rigidity of the target biologic.
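
The cleavage rules described above can be illustrated with a small in silico digest. This is a minimal sketch under simplifying assumptions: trypsin is taken to cleave after every K or R and Lys-C after every K, the common "not before proline" exception is ignored, and the function name `digest` and the toy sequence are illustrative only.

```python
# Residues after which each protease is assumed to cleave (simplified rules).
CLEAVAGE_RESIDUES = {"trypsin": "KR", "lysc": "K"}


def digest(sequence, protease="trypsin", missed_cleavages=0):
    """Return the peptides of a simplified in silico digest, in sequence order."""
    sites = CLEAVAGE_RESIDUES[protease]

    # Cut the sequence C-terminal to every cleavage residue.
    fragments, start = [], 0
    for i, residue in enumerate(sequence):
        if residue in sites:
            fragments.append(sequence[start:i + 1])
            start = i + 1
    if start < len(sequence):
        fragments.append(sequence[start:])  # C-terminal peptide without K/R

    # Join neighbouring fragments to model up to `missed_cleavages` skipped sites.
    peptides = []
    for i in range(len(fragments)):
        last = min(i + missed_cleavages, len(fragments) - 1)
        for j in range(i, last + 1):
            peptides.append("".join(fragments[i:j + 1]))
    return peptides


toy_sequence = "AAKGGRPPK"  # hypothetical sequence, not a real biologic
print(digest(toy_sequence, "trypsin"))  # ['AAK', 'GGR', 'PPK']
print(digest(toy_sequence, "lysc"))     # ['AAK', 'GGRPPK']
```

Comparing the trypsin and Lys-C outputs shows why a Lys-C pre-digest yields longer intermediate fragments that trypsin can then finish after dilution.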

Figure 1. A 3D surface plot illustrating the effect of denaturant concentration (Urea, 0–8 M) and enzyme-to-substrate ratio (1:100 to 1:10) on digestion completeness (peptide yield). Inset molecular models show a partially unfolded mAb Fc domain with trypsin (inaccessible) versus Lys-C (accessible) superimposed on the cleavage site, visualizing the energy barrier that must be overcome for complete proteolysis.

Denaturation Energetics: Urea versus RapiGest

Before an enzyme can cleave, the protein must unfold. The energy required to disrupt the non-covalent interactions maintaining the native state is supplied by chemical denaturants. Urea and guanidine hydrochloride are the traditional workhorses. They function by competing for hydrogen bonds and weakening the hydrophobic effect that drives protein folding. At concentrations of 6–8 M, most globular proteins exist as random coils with all cleavage sites exposed.

However, the denaturant that facilitates digestion also introduces downstream complications. Urea at high concentrations can carbamylate lysine residues and the N-terminus of peptides, adding +43 Da mass shifts that confound quantification. Guanidine is incompatible with trypsin activity unless extensively diluted. And both reagents must be removed or diluted prior to LC-MS/MS analysis because they suppress electrospray ionization and foul chromatographic columns.

Acid-labile surfactants such as RapiGest SF (an anionic surfactant with an acid-cleavable linkage) offer an elegant alternative. These detergents solubilize hydrophobic protein domains and promote unfolding at concentrations as low as 0.1% (w/v). Critically, they do not inhibit trypsin at these concentrations, and acidification after digestion hydrolyzes them into by-products that no longer behave as surfactants; the poorly soluble fraction is removed by centrifugation. The resulting peptide mixture is largely free of surfactant contamination and ready for direct injection. For sensitive downstream applications like Protein Quantification or Absolute Quantification (AQUA), the cleanliness of the peptide digest directly impacts the lower limit of quantitation.

The choice between urea and RapiGest is fundamentally a trade-off between denaturation power and sample cleanliness. Urea is inexpensive and universally available but requires desalting or dilution. RapiGest streamlines the workflow but adds cost and may not fully unfold exceptionally stable proteins. For most research biologic applications—especially those requiring high-throughput analysis of plasma or serum samples—the clean peptide background provided by RapiGest digestion justifies its use.

Signature Peptide Selection: Thermodynamic Optimization

If the bottom-up approach is the foundation of protein quantitation, then the signature peptide is the cornerstone. Selecting the wrong peptide dooms the method before a single sample is injected. The peptide must be unique to the target protein, efficiently released during digestion, stable throughout sample handling, and fly well in the electrospray source. These requirements are not independent; they interact through the peptide's physicochemical properties.

In Silico Selection Logic: Bypassing the "Hot-Spot" Minefield

The first filter is uniqueness. A BLAST search of the candidate peptide sequence against the proteome of the relevant species (human, mouse, rat, or cynomolgus monkey) confirms that the peptide appears only in the target biologic. Cross-reactivity with endogenous proteins generates background signal that degrades the lower limit of quantitation (LLOQ). This is particularly critical for human mAb quantitation in human serum, where the endogenous immunoglobulin background can be orders of magnitude higher than the therapeutic concentration. Services like Shotgun Protein Identification often rely on this principle of unique peptide identification for confident protein inference.

The second filter, and one that separates robust methods from fragile ones, is post-translational modification (PTM) hotspot prediction. Certain sequence motifs are inherently unstable. Asparagine followed by glycine (NG) undergoes rapid deamidation at neutral pH, converting to aspartic or isoaspartic acid. Methionine residues oxidize to methionine sulfoxide upon exposure to atmospheric oxygen or during electrospray ionization. N-terminal glutamine cyclizes to pyroglutamate. Any peptide containing these motifs will exist as a mixture of modified and unmodified forms, splitting the signal and reducing quantitative precision.

Modern in silico tools—such as PTM prediction algorithms integrated into Skyline, or commercial APIs like Levitate Bio's PTM Predictor—can scan candidate peptide sequences and flag these hotspots before a single digestion is performed. The analyst can then select an alternative peptide from a different region of the protein sequence, one that lacks NG motifs, avoids methionine, and shows low predicted susceptibility to oxidation based on its three-dimensional solvent accessibility. For proteins with complex disulfide patterns, accurate Peptide Mapping provides the empirical foundation for confirming that the selected surrogate peptide is indeed released in a 1:1 stoichiometry with the parent molecule.
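
The hotspot logic itself is simple enough to sketch. The following is a hypothetical minimal scanner, not the Skyline or vendor implementation: it flags only the three liabilities named above (NG deamidation, methionine oxidation, N-terminal glutamine cyclization) and reports the monoisotopic mass shift each would introduce. The names `HOTSPOTS` and `flag_hotspots` are illustrative.

```python
import re

# Liability label -> (sequence pattern, monoisotopic mass shift in Da).
HOTSPOTS = {
    "NG deamidation": (re.compile(r"NG"), 0.98402),
    "Met oxidation": (re.compile(r"M"), 15.99491),
    "N-terminal Gln to pyroglutamate": (re.compile(r"^Q"), -17.02655),
}


def flag_hotspots(peptide):
    """Return (label, 1-based position, mass shift) for each liability found."""
    hits = []
    for label, (pattern, shift) in HOTSPOTS.items():
        for match in pattern.finditer(peptide):
            hits.append((label, match.start() + 1, shift))
    return hits


# Example candidate containing an NG motif at residue 14.
for hit in flag_hotspots("VVSVLTVLHQDWLNGK"):
    print(hit)
```

A sequence-only scan like this cannot account for solvent accessibility, so it is best treated as a first-pass filter before structure-aware prediction or empirical stress testing.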

Figure 2. A 3D scatter bubble plot showing candidate tryptic peptides from a representative mAb sequence distributed across three axes: Hydrophobicity (GRAVY Score), ESI Flyability (Predicted Ionization Efficiency), and PTM Risk Score. Bubble size corresponds to peptide length (7–25 residues). The optimal region (high flyability, moderate hydrophobicity, low PTM risk) is highlighted in green, making the multi-criteria optimization of signature peptide selection immediately intuitive.

Physicochemical Constraints: Balancing Retention and Ionization

Once uniqueness and stability are assured, the peptide's chromatographic and mass spectrometric behavior must be optimized. Two properties dominate this optimization: hydrophobicity and proton affinity.

Hydrophobicity, often quantified by the GRAVY score (Grand Average of Hydropathy), dictates retention on a reversed-phase C18 column. Peptides that are too hydrophilic (GRAVY < -1.0) elute near the void volume, co-eluting with salts and polar matrix components that cause severe ion suppression. Peptides that are too hydrophobic (GRAVY > +1.5) stick tenaciously to the column, requiring high organic solvent concentrations for elution and exhibiting broad, tailing peaks. The optimal GRAVY window for LC-MS/MS quantitation is approximately -0.5 to +1.0. Peptides in this range elute in the middle of the acetonitrile gradient, well separated from the early suppression zone and the late-eluting phospholipid interference.
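
As a concrete illustration, the GRAVY score is the mean Kyte-Doolittle hydropathy value over all residues. The sketch below computes it and applies the thresholds quoted above; the function names and the "borderline" label for scores between the stated ranges are assumptions made for this example.

```python
# Kyte-Doolittle hydropathy index for the 20 standard amino acids.
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def gravy(peptide):
    """Grand average of hydropathy: mean Kyte-Doolittle value per residue."""
    return sum(KYTE_DOOLITTLE[aa] for aa in peptide) / len(peptide)


def classify(score):
    """Bin a GRAVY score using the ranges quoted in the text."""
    if score < -1.0:
        return "too hydrophilic"
    if score > 1.5:
        return "too hydrophobic"
    if -0.5 <= score <= 1.0:
        return "preferred window"
    return "borderline"


candidate = "GPSVFPLAPSSK"  # example sequence
score = gravy(candidate)
print(f"{candidate}: GRAVY = {score:.3f} ({classify(score)})")
```

GRAVY is a coarse predictor of reversed-phase retention, so candidates near a boundary are worth confirming with a scouting gradient.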

Proton affinity determines how efficiently the peptide accepts a proton during electrospray ionization. Basic residues (arginine, lysine, and histidine) are the primary protonation sites. Tryptic peptides, by definition, terminate in either lysine or arginine (unless they represent the protein's C-terminus). This C-terminal basic residue is the dominant driver of ESI response. However, internal basic residues can further enhance ionization. A peptide containing two arginine residues will generally produce a stronger signal than a peptide with one arginine and one lysine. Note, though, that an internal lysine or arginine is itself a potential missed-cleavage site, so any gain in ionization must be weighed against the risk of incomplete release; an internal histidine carries no such penalty. Conversely, acidic residues (aspartic acid, glutamic acid) suppress ionization in positive ion mode and should be minimized. Advanced SRM & MRM method development takes these ionization properties into account when selecting optimal transitions.

The selection of a signature peptide is therefore an exercise in multi-parameter optimization. The analyst must balance uniqueness, stability, hydrophobicity, and ionization efficiency. When multiple candidate peptides satisfy all criteria, the one with the highest MS/MS spectral quality—producing abundant y-type fragment ions with low background—is chosen for method development. De Novo Peptides/Proteins Sequencing capabilities can be invaluable when working with novel biologics where reference sequences are incomplete.

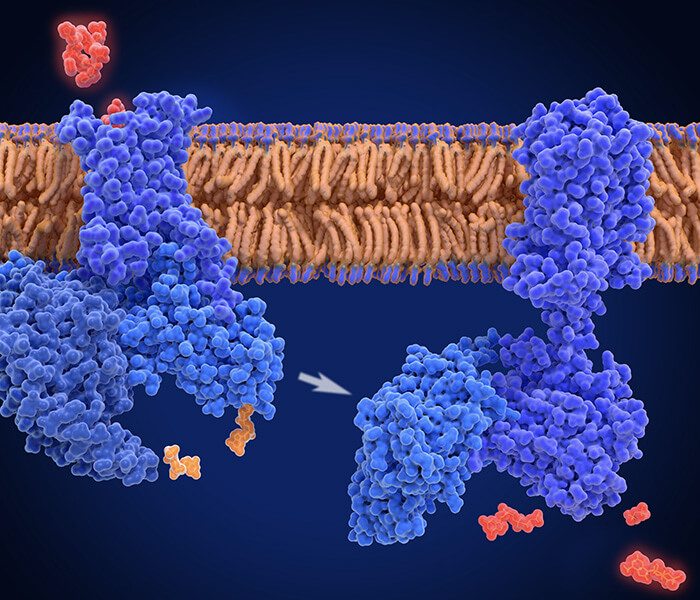

Internal Standardization: SIL-Protein versus SIL-Peptide Physics

No analytical method is complete without an internal standard. The internal standard corrects for variability introduced at every step of the workflow: sample handling, extraction, digestion, injection, ionization, and detection. But what, exactly, is being corrected depends critically on the chemical form of the internal standard.

The SIL-Peptide Paradigm and Its Limitations

The most common approach in protein LC-MS/MS is the use of a stable isotope-labeled (SIL) peptide—a synthetic version of the signature peptide in which one or more amino acids are enriched in 13C and 15N. This labeled peptide is chemically identical to the native peptide except for its mass, which is shifted by a known number of daltons (typically +6 to +10 Da). The SIL peptide is spiked into the sample at a known concentration immediately after digestion.

This strategy corrects for post-digestion variability—fluctuations in injection volume, ionization efficiency, and MS detection sensitivity. If the native peptide and SIL peptide co-elute, any variation in the LC-MS/MS system affects both species identically, and the ratio of their signals remains constant. This is a powerful correction and is the foundation of modern quantitative proteomics, including techniques like Label-free Quantification where internal standards are used for normalization.
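
A short sketch makes the ratio argument concrete. It assumes the common labeling scheme in which the C-terminal residue is 13C6,15N2-lysine or 13C6,15N4-arginine, and it uses a single-point ratio calculation for illustration only (a real assay would use a calibration curve). All function names and peak areas are hypothetical.

```python
# Monoisotopic mass shift (Da) of the assumed heavy C-terminal residue.
LABEL_SHIFT_DA = {"K": 8.014199, "R": 10.008269}


def heavy_mz_offset(peptide, charge):
    """m/z difference between the SIL and native precursor at a given charge."""
    return LABEL_SHIFT_DA[peptide[-1]] / charge


def conc_from_ratio(native_area, sil_area, sil_conc):
    """Single-point estimate: analyte conc = area ratio x internal standard conc."""
    return native_area / sil_area * sil_conc


print(f"2+ precursor offset: {heavy_mz_offset('GPSVFPLAPSSK', 2):.4f} m/z")

# Hypothetical peak areas; 40% ion suppression scales both species equally.
clean = conc_from_ratio(2000.0, 4000.0, sil_conc=100.0)
suppressed = conc_from_ratio(2000.0 * 0.6, 4000.0 * 0.6, sil_conc=100.0)
print(clean, suppressed)  # both estimates are 50 (same units as sil_conc)
```

Because suppression multiplies numerator and denominator by the same factor, the estimate is unchanged, which is exactly the correction co-elution provides.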

But the SIL-peptide approach has a blind spot: it cannot correct for variability that occurs before the spike. If the protein extraction efficiency from serum is 60% in one sample and 75% in another, the SIL peptide—added after extraction—knows nothing of this difference. The final measured concentration will reflect this pre-analytical variability, degrading both accuracy and precision. For demanding applications, laboratories may turn to Parallel Reaction Monitoring (PRM) which offers improved selectivity but still requires careful internal standard strategy.

Figure 3. A side-by-side flowchart comparing two internal standardization strategies. Left panel (SIL-Peptide): The workflow shows sample preparation, digestion, SIL-peptide spike, and LC-MS/MS. A red "X" marks the uncorrected extraction and digestion steps. Right panel (SIL-Protein): The SIL-protein spike occurs at the very beginning, with green checkmarks indicating that every step from extraction to detection is corrected. The visual contrasts the "tracking" fidelity of the two approaches.

The SIL-Protein Advantage: Tracking from the Very Beginning

A stable isotope-labeled intact protein (u-13C/15N-labeled biologic) provides a more comprehensive correction. Because the labeled protein is spiked into the sample at the very beginning—before any extraction, enrichment, or digestion steps—it experiences every source of variability that the native protein experiences. If the extraction efficiency is 60%, both the native and labeled proteins are extracted with 60% efficiency. If the digestion is incomplete, both proteins are incompletely digested to the same extent. The SIL-protein acts as a true "tracker" that mirrors the fate of the analyte from sample collection to final detection.

The physics underlying this advantage is straightforward: variance in the measured ratio (native / labeled) is minimized when the two species are as chemically similar as possible and introduced at the earliest possible stage. SIL-peptide internal standards correct for variance in the final analytical steps. SIL-protein internal standards correct for variance in the entire workflow. For applications demanding the highest quantitative rigor—such as pharmacokinetic studies supporting regulatory submission—the SIL-protein approach is increasingly considered the gold standard. This is particularly true for complex matrices where Plasma/Serum Proteomics Service workflows must contend with high background and variable recovery.
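
The difference between the two strategies can be shown with a toy model of the workflow. This is a deliberately simplified sketch: each step is reduced to a multiplicative yield, the labeled protein is assumed to behave identically to the analyte, and the function name, parameters, and numbers are illustrative.

```python
def measured_conc(true_conc, recovery, digestion_yield, ms_response,
                  is_type, is_conc=100.0):
    """Back-calculate analyte concentration from the native/IS area ratio."""
    native_area = true_conc * recovery * digestion_yield * ms_response
    if is_type == "sil_protein":
        # Spiked before extraction: shares recovery, digestion and MS response.
        is_area = is_conc * recovery * digestion_yield * ms_response
    elif is_type == "sil_peptide":
        # Spiked after digestion: shares only the MS response.
        is_area = is_conc * ms_response
    else:
        raise ValueError(f"unknown internal standard type: {is_type}")
    return native_area / is_area * is_conc


# Two samples with the same true concentration but different extraction recovery.
for recovery in (0.60, 0.75):
    peptide_is = measured_conc(50.0, recovery, 1.0, 1.0, "sil_peptide")
    protein_is = measured_conc(50.0, recovery, 1.0, 1.0, "sil_protein")
    print(f"recovery {recovery:.2f}: SIL-peptide {peptide_is:.1f}, "
          f"SIL-protein {protein_is:.1f}")
```

In practice calibrators are processed alongside the samples, so a SIL-peptide assay is biased only by sample-to-sample differences in recovery rather than by the absolute value; the model shows why those differences pass through uncorrected.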

Immunocapture Thermodynamics: The Hybrid LBA/LC-MS Approach

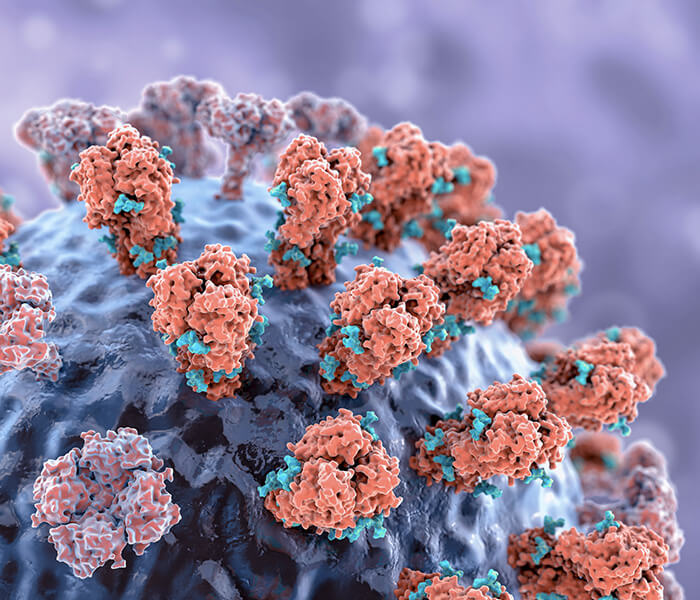

For many research biologics, the concentrations of interest in plasma or serum are exceedingly low—nanograms per milliliter or even picograms per milliliter. Direct digestion of a 10 µL serum aliquot followed by LC-MS/MS simply lacks the sensitivity to reach these levels. The solution is immunocapture: using an antibody or antigen to selectively enrich the target biologic from the complex matrix before digestion.

This is a hybrid approach that marries the specificity of ligand-binding assays (LBA) with the selectivity of LC-MS/MS. A biotinylated capture reagent—often the target antigen for an mAb therapeutic, or an anti-idiotypic antibody—is immobilized on a streptavidin-coated magnetic bead. When serum is incubated with these beads, the target biologic binds specifically while thousands of abundant serum proteins (albumin, IgG, transferrin) are washed away. The enriched biologic is then digested on-bead and analyzed. Techniques like Co-immunoprecipitation/mass spectrometry (co-IP/MS) operate on similar principles of affinity enrichment prior to MS detection.

The efficiency of this enrichment is governed by the thermodynamics of the antibody-antigen binding equilibrium. The interaction is characterized by an association rate constant (k_on) and a dissociation rate constant (k_off). The ratio Kd = k_off / k_on is the equilibrium dissociation constant—a measure of binding affinity. For efficient capture, Kd should be in the low nanomolar to picomolar range.

But affinity is not the only consideration. The kinetics of binding matter for assay robustness. A capture reagent with a slow k_on will require long incubation times to reach equilibrium. A capture reagent with a fast k_off will lose bound analyte during the wash steps, reducing recovery. The optimal capture reagent combines fast association, slow dissociation, and high specificity. For researchers requiring quantitative binding data, Biacore Service provides surface plasmon resonance measurements that directly characterize these kinetic constants.
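
These relationships can be put into numbers with a few lines of code. The sketch assumes simple 1:1 binding with the capture reagent in large excess over the analyte (pseudo-first-order kinetics) and no rebinding during washes; the rate constants and reagent concentration are hypothetical values chosen for illustration.

```python
import math


def fraction_captured(kd, reagent_conc):
    """Equilibrium fraction of analyte bound; kd and reagent_conc in the same units."""
    return reagent_conc / (reagent_conc + kd)


def approach_to_equilibrium(k_on, k_off, reagent_molar, seconds):
    """Fraction of the equilibrium binding level reached after a given incubation."""
    k_obs = k_on * reagent_molar + k_off  # observed pseudo-first-order rate, 1/s
    return 1.0 - math.exp(-k_obs * seconds)


def retained_after_wash(k_off, wash_seconds):
    """Fraction of bound analyte still on the bead after washing (no rebinding)."""
    return math.exp(-k_off * wash_seconds)


# Hypothetical reagent: k_on = 1e5 1/(M s), k_off = 1e-4 1/s, so Kd = 1 nM.
k_on, k_off = 1e5, 1e-4
kd_nM = k_off / k_on * 1e9
print(f"Kd = {kd_nM:.2f} nM")
print(f"captured at 10 nM reagent: {fraction_captured(kd_nM, 10.0):.3f}")
print(f"equilibration after 1 h:   {approach_to_equilibrium(k_on, k_off, 10e-9, 3600):.3f}")
print(f"retained after 10 min wash: {retained_after_wash(k_off, 600):.3f}")
```

Raising `k_off` to 1e-2 1/s in the last call shows how a fast-dissociating reagent loses nearly all bound analyte during the same wash.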

Figure 4. A 3D molecular surface representation of an antibody Fab fragment binding to its cognate antigen. Arrows labeled "k_on" (association) and "k_off" (dissociation) are overlaid on the structure. A small inset shows a saturation binding curve with axes labeled "[Ligand]" and "Bound", emphasizing the dynamic equilibrium that governs immunocapture enrichment and method sensitivity.

Advanced LC-MS/MS Quantitation: MRM Design and Matrix Effects

Once the signature peptide is selected and the internal standardization strategy is established, the analytical method moves into the domain of the triple quadrupole mass spectrometer. This instrument, operating in selected reaction monitoring (SRM) or multiple reaction monitoring (MRM) mode, is the workhorse of targeted protein quantitation. Its power lies in its ability to filter out chemical noise and detect only the peptide of interest with extraordinary selectivity. But that selectivity must be engineered—transition by transition, parameter by parameter.

SRM/MRM Transition Optimization: Maximizing Signal-to-Noise

An MRM transition is defined by the mass-to-charge ratio (m/z) of the precursor peptide ion and the m/z of a specific fragment ion produced by collision-induced dissociation (CID). For a typical tryptic peptide carrying two or three positive charges, the precursor ion is the doubly or triply charged species, often denoted as [M+2H]2+ or [M+3H]3+. The fragment ions are predominantly y-type ions—those containing the C-terminus of the peptide—because the basic residue at the C-terminus retains the proton during fragmentation.
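
The precursor and y-ion m/z values that define a transition follow directly from monoisotopic residue masses. The sketch below is a minimal calculator for unmodified peptides; the function names are illustrative, and modified residues (for example carbamidomethylated cysteine) would need their own mass entries.

```python
# Monoisotopic residue masses (Da) for the 20 standard amino acids.
MONO = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 103.00919, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER = 18.010565
PROTON = 1.007276


def precursor_mz(peptide, charge):
    """m/z of the [M+zH]z+ precursor ion."""
    neutral_mass = sum(MONO[aa] for aa in peptide) + WATER
    return (neutral_mass + charge * PROTON) / charge


def y_ion_mz(peptide, n, charge=1):
    """m/z of the y_n fragment: the last n residues plus water plus z protons."""
    fragment_mass = sum(MONO[aa] for aa in peptide[-n:]) + WATER
    return (fragment_mass + charge * PROTON) / charge


peptide = "GPSVFPLAPSSK"  # example sequence
print(f"[M+2H]2+ = {precursor_mz(peptide, 2):.4f}")
for n in range(1, len(peptide)):
    print(f"y{n} = {y_ion_mz(peptide, n):.4f}")
```

The printed list is a starting point for transition screening; which y-ions are actually intense and interference-free still has to be determined empirically.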

Selecting the optimal transitions is an empirical process guided by a few physical principles. First, the precursor ion should be the most abundant charge state observed in full-scan MS. Second, fragment ions should be selected based on their intensity and freedom from interference. Typically, three to five transitions are monitored per peptide: one serves as the quantifier, and the others serve as qualifiers to confirm peptide identity through ion ratio consistency.

Collision energy (CE) is the critical parameter that governs fragmentation efficiency. Too little energy, and the precursor ion passes through the collision cell intact. Too much energy, and the peptide shatters into small, non-specific fragments that lack diagnostic value. The optimal CE for a given peptide depends on its mass and charge state. A common practice is to perform a CE ramp—acquiring data at incrementally increasing collision energies—and select the value that maximizes the signal-to-noise ratio of the desired fragment ion. Modern instruments can predict optimal CE values based on peptide sequence, but empirical verification remains essential. For laboratories developing methods for novel biologics, SRM & MRM service providers offer pre-optimized transitions and validated collision energies that accelerate method deployment.
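
Selecting the optimum from a CE ramp reduces to picking the step with the highest signal-to-noise ratio. The sketch below assumes the ramp has already been reduced to one signal and one noise value per collision energy; the function name and the example numbers are hypothetical.

```python
def best_collision_energy(ramp):
    """Return the CE with the highest signal-to-noise ratio.

    ramp: iterable of (collision_energy_eV, fragment_signal, noise) tuples.
    """
    ce, _signal, _noise = max(ramp, key=lambda point: point[1] / point[2])
    return ce


# Hypothetical ramp for one transition.
ce_ramp = [
    (10, 1200.0, 100.0),
    (15, 5400.0, 120.0),
    (20, 9800.0, 140.0),
    (25, 7600.0, 150.0),
    (30, 3100.0, 160.0),
]
print(best_collision_energy(ce_ramp))  # 20
```

Each transition has its own optimum, so the selection is normally repeated per fragment ion rather than per peptide.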

Quantifying the Matrix Effect: Post-Column Infusion Mapping

No discussion of bioanalytical LC-MS/MS is complete without confronting the matrix effect. This phenomenon—most often manifesting as ion suppression—arises when co-eluting matrix components compete with the analyte for available protons during electrospray ionization. The result is a decrease in signal intensity that compromises sensitivity, accuracy, and precision. Phospholipids, salts, and polyethylene glycol are notorious suppressors.

The gold-standard experiment for visualizing the matrix effect is the post-column infusion study. A constant stream of the analyte (or a representative compound) is infused into the LC effluent via a tee fitting positioned after the analytical column. Simultaneously, a blank matrix extract is injected and subjected to the full chromatographic gradient. As matrix components elute, they suppress the infused analyte signal, creating a "negative chromatogram" that maps the zones of ion suppression.

This experiment yields a practical, actionable roadmap for method optimization. The analyst can see precisely where the early-eluting salt front causes suppression, where the late-eluting phospholipid envelope (typically between 80% and 95% organic solvent) quenches the signal, and—most importantly—where the suppression is minimal. The goal of chromatographic method development is then simple: adjust the gradient to move the analyte's retention time into a "safe window" free from suppression. This is the physical justification for investing time in high-resolution chromatography rather than relying solely on the mass spectrometer's selectivity.
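
Turning an infusion trace into candidate elution windows can be sketched as follows. The code assumes the trace has been exported as paired time and intensity values and that an unsuppressed baseline intensity is known from a solvent-blank injection; the 85% acceptance threshold and the function name are assumptions for illustration, not a regulatory criterion.

```python
def safe_windows(times, infusion_signal, baseline, min_percent=85.0):
    """Return (start, end) time windows where the infused signal stays
    at or above min_percent of the unsuppressed baseline."""
    windows, start, previous_time = [], None, None
    for t, signal in zip(times, infusion_signal):
        ok = 100.0 * signal / baseline >= min_percent
        if ok and start is None:
            start = t  # a new acceptable window opens here
        elif not ok and start is not None:
            windows.append((start, previous_time))  # window closed at last good point
            start = None
        previous_time = t
    if start is not None:
        windows.append((start, previous_time))
    return windows


# Hypothetical trace: early salt front, clean middle, late phospholipid zone.
minutes = [0, 1, 2, 3, 4, 5, 6, 7]
trace = [30, 40, 95, 100, 98, 90, 50, 20]
print(safe_windows(minutes, trace, baseline=100.0))  # [(2, 5)]
```

The gradient can then be adjusted so that the signature peptide and its SIL partner elute inside one of the returned windows.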

Figure 5. A 3D visualization of a post-column infusion experiment for mapping matrix effects. The main element is a stylized LC chromatogram represented as a 3D peak landscape (time on X-axis, intensity on Y-axis). Overlaid on this landscape is a semi-transparent heatmap layer that shifts from green (no suppression) to yellow (moderate suppression) to red (severe ion suppression). A narrow vertical band in the middle is highlighted in bright green with a peptide icon, indicating the optimized elution window where the analyte avoids the red suppression zones caused by early-eluting salts and late-eluting phospholipids.

Calibration Curve Linearity: Managing Dynamic Range and Detector Saturation

Absolute quantitation requires a calibration curve. Known concentrations of the reference standard (the intact biologic or a synthetic signature peptide) are spiked into a surrogate matrix—often the same biofluid as the study samples but devoid of endogenous analyte—and processed identically. The ratio of analyte peak area to internal standard peak area is plotted against concentration, and a regression model is fitted.

For most LC-MS/MS assays, a linear model with 1/x or 1/x² weighting provides the best fit across the dynamic range. The range itself is defined by the lower limit of quantitation (LLOQ) and the upper limit of quantitation (ULOQ). The LLOQ is the lowest concentration at which the analyte can be measured with acceptable accuracy (typically within ±20% bias) and precision (≤20% CV). The ULOQ is the highest concentration that falls within the linear range of the detector.
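
A weighted linear fit and the back-calculation check can be sketched in plain Python. This uses the closed-form weighted least-squares solution with weights of 1/x or 1/x²; the calibrator values are hypothetical, and validated software would normally perform this step in a regulated laboratory.

```python
def weighted_linear_fit(conc, ratio, power=2):
    """Fit ratio = slope * conc + intercept using weights 1 / conc**power."""
    w = [1.0 / c ** power for c in conc]
    sw = sum(w)
    swx = sum(wi * x for wi, x in zip(w, conc))
    swy = sum(wi * y for wi, y in zip(w, ratio))
    swxx = sum(wi * x * x for wi, x in zip(w, conc))
    swxy = sum(wi * x * y for wi, x, y in zip(w, conc, ratio))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return slope, intercept


def back_calculate(ratio, slope, intercept):
    """Convert an analyte/IS area ratio into a concentration."""
    return (ratio - intercept) / slope


def percent_bias(measured, nominal):
    return 100.0 * (measured - nominal) / nominal


# Hypothetical calibrators (ng/mL) and analyte/IS area ratios.
nominal = [1, 2, 5, 10, 50, 100]
ratios = [0.0215, 0.0405, 0.1020, 0.1990, 1.0100, 1.9800]
slope, intercept = weighted_linear_fit(nominal, ratios, power=2)
for c, r in zip(nominal, ratios):
    found = back_calculate(r, slope, intercept)
    print(f"{c:>5} ng/mL: back-calculated {found:.3f}, bias {percent_bias(found, c):+.1f}%")
```

The 1/x² weighting keeps the high calibrators from dominating the fit, which is why back-calculated bias at the LLOQ is usually much better than with an unweighted regression.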

But why does the curve stop being linear at high concentrations? A large part of the answer lies in the physics of detector saturation. In a triple quadrupole mass spectrometer, ions strike an electron multiplier or photomultiplier tube, generating a cascade of secondary electrons that produce a measurable current. At very high ion fluxes, the detector cannot replenish the charge on its multiplying surfaces fast enough between ion arrivals. The response per ion decreases, and the calibration curve bends toward a plateau. The electrospray source can contribute as well, because the charge available on each droplet is finite. This is not a failure of the chemistry; it is a fundamental limitation of the ionization and detection hardware.
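
One simplified way to picture the bend is the non-paralyzable dead-time model used for pulse-counting detectors, in which the observed count rate falls behind the true rate as ions arrive faster than the detector can recover. This is an illustrative model only; real instruments may use analog detection and apply their own corrections, and the 10 ns dead time is an assumed value.

```python
def observed_rate(true_rate_cps, dead_time_s):
    """Non-paralyzable dead-time model: observed = true / (1 + true * dead_time)."""
    return true_rate_cps / (1.0 + true_rate_cps * dead_time_s)


def percent_loss(true_rate_cps, dead_time_s):
    """Percentage of ion events missed at a given true arrival rate."""
    return 100.0 * (1.0 - observed_rate(true_rate_cps, dead_time_s) / true_rate_cps)


DEAD_TIME_S = 1e-8  # assumed 10 ns recovery time, for illustration only
for rate in (1e4, 1e5, 1e6, 1e7, 1e8):
    print(f"true {rate:.0e} cps -> observed {observed_rate(rate, DEAD_TIME_S):.3e} cps "
          f"({percent_loss(rate, DEAD_TIME_S):.2f}% lost)")
```

The loss is negligible over most of the range and then grows rapidly, which matches the shape of a calibration curve that is linear until it suddenly rolls over near the ULOQ.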

Managing this phenomenon requires understanding the assay's intended use. For pharmacokinetic studies, the expected concentrations may span four to six orders of magnitude, from Cmax shortly after dosing to the trough concentration weeks later. Diluting high-concentration samples into the linear range is standard practice, but each dilution step introduces its own uncertainty. Alternatively, the analyst can reduce the injection volume or use a less sensitive MRM transition for high-concentration samples. For comprehensive protein quantification across wide dynamic ranges, Absolute Quantification (AQUA) workflows are designed with these dynamic range considerations in mind.

Figure 6. A 3D dual Y-axis graph illustrating the dynamic range and detector saturation physics in quantitative LC-MS/MS. The X-axis represents "Concentration (Log Scale)". The left Y-axis represents "Detector Response". The right Y-axis represents "Saturation Level". A smooth sigmoidal curve shows the calibration range from LLOQ to ULOQ. The linear portion (4–6 orders of magnitude) is highlighted in blue. At the high-concentration end, the curve flattens into a red-shaded plateau labeled "Detector Saturation". A small inset shows a simplified schematic of an electron multiplier with too many ions arriving simultaneously.

From Data to Decision: The Bioanalytical Report

The final output of an LC-MS/MS method is not a chromatogram but a concentration value—a number that informs critical decisions in drug development. That number carries with it an uncertainty budget accumulated from every step of the workflow: sample collection, storage stability, extraction recovery, digestion completeness, internal standard spiking, injection precision, ionization variability, and detector response.

A rigorous bioanalytical method validation, conducted according to guidelines such as ICH M10, quantifies each of these contributions. Accuracy and precision are assessed at multiple quality control (QC) levels spanning the calibration range. Dilution integrity is confirmed for samples exceeding the ULOQ. Matrix effects are evaluated in multiple individual lots of the target biofluid. And the stability of the analyte is established under conditions that mimic sample handling, from the clinic to the instrument.
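
The accuracy and precision assessment at each QC level comes down to two statistics: percent bias of the mean and percent CV of the replicates. The sketch below computes both; the acceptance limits (15% generally, 20% at the LLOQ) are the ones commonly applied to chromatographic assays and are an assumption here, since hybrid immunocapture methods may justify different limits. The replicate values are hypothetical.

```python
from statistics import mean, stdev


def qc_summary(measured, nominal):
    """Return (percent bias of the mean, percent CV) for one QC level."""
    average = mean(measured)
    bias = 100.0 * (average - nominal) / nominal
    cv = 100.0 * stdev(measured) / average
    return bias, cv


def passes(bias, cv, is_lloq=False):
    """Apply the assumed acceptance limits to one QC level."""
    limit = 20.0 if is_lloq else 15.0
    return abs(bias) <= limit and cv <= limit


# Hypothetical mid-level QC replicates (ng/mL) at a nominal 10 ng/mL.
replicates = [9.5, 10.2, 10.1, 9.8, 10.4]
bias, cv = qc_summary(replicates, nominal=10.0)
print(f"bias {bias:+.1f}%, CV {cv:.1f}%, pass = {passes(bias, cv)}")
```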

For research biologics intended for human use, this rigor is not optional. It is the foundation of regulatory trust. The LC-MS/MS method that quantifies a therapeutic protein in a Phase I clinical trial must perform identically whether the sample is analyzed today or six months from now, in St. Louis or in Singapore. Achieving that level of robustness requires more than following a protocol. It requires understanding the physics and chemistry that underlie every step of the workflow—the thermodynamics of digestion, the electrostatics of ionization, the kinetics of immunocapture, and the statistics of calibration.

In the evolving landscape of protein bioanalysis, LC-MS/MS has matured from a niche research tool into a mainstream platform that rivals and often surpasses traditional ligand-binding assays. Its strengths—unmatched selectivity, the ability to distinguish the therapeutic from its endogenous counterpart, and the capacity to multiplex dozens of analytes in a single injection—position it as the method of choice for the next generation of biologic therapeutics. For laboratories seeking to implement these workflows without building infrastructure from scratch, Customized Experiments offer a flexible pathway to robust, validated methods tailored to specific biologic targets and matrices.

FAQ: Frequently Asked Questions

Q1: Why can't I just use SIL-peptide internal standards for all my protein quantification needs?

SIL-peptides correct for post-digestion variability only. If your workflow involves protein extraction, immunocapture, or any pre-digestion sample handling, SIL-peptides cannot account for losses that occur before the spike. For maximum accuracy in complex biofluids like serum or tissue homogenates, SIL-protein internal standards provide more complete correction. The trade-off is cost and availability—SIL-proteins are more expensive to produce and may not be commercially available for novel biologics.

Q2: How do I know if my signature peptide is truly unique?

Perform a BLAST search of the candidate peptide sequence against the complete proteome of the relevant species (human, mouse, rat, etc.). The peptide must have zero exact matches except to the target protein. When quantifying human mAbs in human serum, also check the candidate against endogenous immunoglobulins, because constant-region and framework sequences are shared with circulating IgG. Even a peptide with a unique sequence can sit on a high background if a closely related peptide from an abundant endogenous protein produces a near-isobaric precursor, so confirm selectivity in blank matrix as well.
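
A quick exact-match screen can complement the BLAST search. The sketch below is an assumption-laden illustration, not a replacement for a database search: it treats isoleucine and leucine as equivalent because they are isobaric, and it uses a toy dictionary of hypothetical sequences where a real check would load a full proteome FASTA file. The names `normalize` and `find_offtarget_hits` are invented for this example.

```python
def normalize(seq):
    """Treat isoleucine and leucine as equivalent, since they are isobaric."""
    return seq.upper().replace("I", "L")

def find_offtarget_hits(peptide, proteome, target_id):
    """Return IDs of non-target proteins that contain the peptide sequence."""
    query = normalize(peptide)
    return [
        protein_id
        for protein_id, sequence in proteome.items()
        if protein_id != target_id and query in normalize(sequence)
    ]

# Hypothetical toy sequences for illustration only
proteome = {
    "target_mAb": "MKTAYIAKQRDLSGVTEELLK",
    "endogenous_A": "MSSHEGGKDISGVTEELIKAA",  # differs only by I/L substitutions
    "endogenous_B": "MAAPLLRTWQK",
}

hits = find_offtarget_hits("DLSGVTEELLK", proteome, "target_mAb")
print(hits)
```

Here the candidate is flagged because `endogenous_A` carries an I/L variant of the same sequence, a conflict that a strict string comparison without normalization would miss.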

Q3: What is the most common cause of poor digestion reproducibility?

Incomplete denaturation. If your target protein is a disulfide-rich biologic like an IgG1 mAb, a simple trypsin digestion in ammonium bicarbonate will not fully cleave the structurally protected hinge and Fc regions. Use a two-step Lys-C/trypsin protocol with a strong denaturant (8 M urea) or an MS-compatible surfactant (0.1% RapiGest), and include reduction and alkylation steps. If urea is used, dilute it to about 1 M before adding trypsin, because trypsin loses activity at high urea concentrations. Monitor digestion completeness by tracking the release of a second signature peptide from a different region of the protein.

Q4: My calibration curve is linear but the QC samples at high concentration are biased low. What is happening?

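The most likely cause is saturation at the top of the range. At high ion flux, the electron multiplier no longer responds linearly, and the electrospray process itself can saturate. A curve can look linear by its correlation coefficient while the highest levels still read low, so inspect the back-calculated accuracy of the top calibrators rather than relying on the fit statistic alone. Dilute high-concentration QCs before analysis, reduce injection volume, or switch to a less sensitive MRM transition. Validate dilution integrity as part of your method qualification.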
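
One simple diagnostic for saturation is to look at the response factor (response divided by concentration) at each calibrator: a linear system gives a roughly constant value, while saturation shows up as a droop at the top. The sketch below assumes calibrators are listed in ascending order; the function name `flag_response_droop`, the 10% tolerance, and the data are hypothetical.

```python
from statistics import median

def flag_response_droop(concs, area_ratios, tolerance_pct=10.0):
    """Return concentrations whose response factor falls below the
    low-end median by more than tolerance_pct."""
    factors = [ratio / conc for conc, ratio in zip(concs, area_ratios)]
    # Use the lower half of the curve as the linear reference region
    reference = median(factors[: len(factors) // 2])
    return [
        conc
        for conc, factor in zip(concs, factors)
        if 100.0 * (reference - factor) / reference > tolerance_pct
    ]

# Hypothetical calibrators (ng/mL) and analyte/IS peak area ratios
concs = [10, 50, 100, 500, 1000, 5000]
area_ratios = [0.020, 0.100, 0.200, 0.990, 1.900, 7.500]

flagged = flag_response_droop(concs, area_ratios)
print(flagged)
```

In this made-up data set only the top calibrator is flagged, which is the pattern that would produce low-biased high QCs.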

Q5: Can I use the same LC-MS/MS method for plasma and serum interchangeably?

Not without validation. Serum and plasma have different matrix compositions—serum lacks fibrinogen and has undergone clotting, which can alter protein binding and release protease activity. Evaluate matrix effects in both matrices using post-column infusion and validate the method separately for each intended matrix.

Q6: How many signature peptides should I monitor per protein?

At least two, preferably three. Monitoring multiple peptides provides internal consistency checks. If the measured concentration from Peptide A is 50 ng/mL and from Peptide B is 5 ng/mL, something is wrong—likely incomplete digestion or peptide-specific matrix interference. The ratio of peptide responses should remain constant across samples.
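
The consistency check can be automated per sample. The following is a minimal sketch that reports the spread between peptide-derived concentrations relative to their mean; the function name `peptide_agreement` and the 20% limit are assumptions for illustration, not a regulatory criterion.

```python
def peptide_agreement(conc_by_peptide, max_spread_pct=20.0):
    """Return (spread in percent of the mean, True if within the limit)."""
    values = list(conc_by_peptide.values())
    avg = sum(values) / len(values)
    spread_pct = 100.0 * (max(values) - min(values)) / avg
    return spread_pct, spread_pct <= max_spread_pct

# The discordant case described above: 50 ng/mL versus 5 ng/mL
bad_spread, bad_ok = peptide_agreement({"Peptide A": 50.0, "Peptide B": 5.0})

# A hypothetical concordant sample with three peptides
good_spread, good_ok = peptide_agreement(
    {"Peptide A": 50.0, "Peptide B": 47.0, "Peptide C": 52.0}
)
print(round(bad_spread, 1), bad_ok, round(good_spread, 1), good_ok)
```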

Q7: What is the difference between SRM and MRM?

Functionally, they are synonymous in modern usage. SRM (selected reaction monitoring) was the original term for monitoring a single precursor-to-product transition on a triple quadrupole. MRM (multiple reaction monitoring) refers to monitoring multiple transitions, either for the same peptide or for multiple peptides. Today, all targeted LC-MS/MS experiments on triple quadrupoles are effectively MRM.

Q8: How do I handle batch-to-batch variability in trypsin activity?

Source high-quality, MS-grade trypsin from a reputable vendor and store it properly. But more importantly, include a digestion QC in every batch—a well-characterized protein standard (e.g., bovine serum albumin) digested in parallel with study samples. Monitoring BSA peptide yields provides a real-time check on digestion efficiency.
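
A digestion QC is most useful when it is trended. As a rough sketch, the snippet below compares the current batch's BSA peptide yield against historical batches using a mean plus or minus two standard deviations window. The function name `digestion_qc_in_control`, the two-SD limit, and the normalized yield values are all hypothetical.

```python
from statistics import mean, stdev

def digestion_qc_in_control(historical_yields, current_yield, n_sd=2.0):
    """Return True if the current yield lies within n_sd standard
    deviations of the historical mean."""
    center = mean(historical_yields)
    spread = stdev(historical_yields)
    return abs(current_yield - center) <= n_sd * spread

# Hypothetical BSA peptide yields from earlier batches (normalized response)
history = [1.00, 0.97, 1.03, 0.99, 1.01]

normal_batch = digestion_qc_in_control(history, 0.98)
suspect_batch = digestion_qc_in_control(history, 0.80)
print(normal_batch, suspect_batch)
```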

Q9: Is it acceptable to use synthetic signature peptides for calibration instead of the intact protein?

Yes, this is common practice and is accepted by regulatory agencies when properly validated. The critical assumption is that the peptide is released from the protein with 100% efficiency. This must be demonstrated empirically by comparing the response of the intact protein digest to the synthetic peptide at equimolar concentrations. If the ratio deviates significantly from 1.0, a correction factor may be applied.
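
The equimolar comparison and the resulting correction can be expressed in a few lines. This is only a sketch under stated assumptions: both responses are internal-standard-normalized and measured at the same molar concentration, and the function names and numbers are invented for illustration.

```python
def digestion_recovery(protein_digest_response, synthetic_peptide_response):
    """Ratio of the digest response to the synthetic peptide response
    at equimolar concentration; 1.0 means complete release."""
    return protein_digest_response / synthetic_peptide_response

def corrected_concentration(measured, recovery):
    """Scale a peptide-curve result up to account for incomplete release."""
    return measured / recovery

# Hypothetical IS-normalized responses at equimolar concentration
recovery = digestion_recovery(0.82, 1.00)

# Hypothetical sample result read from the synthetic peptide curve (ng/mL)
corrected = corrected_concentration(41.0, recovery)
print(recovery, round(corrected, 2))
```

A fixed correction factor is only defensible if the recovery is reproducible across the calibration range and across matrix lots, so the ratio should be measured at more than one level.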

Q10: What is the future of LC-MS/MS for protein biologics?

The trend is toward increased multiplexing, higher throughput, and deeper integration with immunocapture. New technologies such as microflow LC, trapped ion mobility spectrometry (TIMS), and intelligent MRM scheduling are pushing LLOQs lower and dynamic ranges wider. Additionally, the regulatory acceptance of LC-MS/MS for protein biomarkers and therapeutic drug monitoring continues to expand, driven by the technique's inherent selectivity and the ability to avoid anti-drug antibody interference.

References

- ICH M10 Guideline on Bioanalytical Method Validation and Study Sample Analysis. International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use. 2022. https://database.ich.org/sites/default/files/M10_Guideline_Step4_2022_0524.pdf

- Van den Broek I, et al. "LC-MS/MS-based quantification of biotherapeutics: Current status and future perspectives." Bioanalysis. 2022;14(1):5-22. https://doi.org/10.4155/bio-2021-0212

- Shuford CM, et al. "Absolute Protein Quantification by Mass Spectrometry: Not as Simple as Advertised." Analytical Chemistry. 2017;89(14):7406-7415. https://doi.org/10.1021/acs.analchem.7b00858

- Chambers EE, et al. "Systematic evaluation of matrix effects in LC-MS/MS analysis of peptides: A post-column infusion approach to mapping phospholipid-induced ion suppression." Rapid Communications in Mass Spectrometry. 2024;38(3):e9678. https://doi.org/10.1002/rcm.9678

- Pino LK, et al. "The Skyline ecosystem: Informatics for quantitative mass spectrometry proteomics." Mass Spectrometry Reviews. 2024;43(2):211-231. https://doi.org/10.1002/mas.21802

- Zhang Y, et al. "Prediction of peptide collision cross sections and ESI response using deep learning." Journal of Proteome Research. 2025;24(1):156-169. https://doi.org/10.1021/acs.jproteome.4c00632