The Physics of the MS/MS Experiment: Generating the Raw Spectral Data

Understanding protein identification begins not with a database search engine, but inside the vacuum chamber of a mass spectrometer. Before any algorithm can assign a score, the instrument must make a crucial decision. Which ions live, and which ions die?

The process starts with a complex mixture of peptides eluting from a liquid chromatography column. These molecules enter the mass spectrometer as a continuous stream of charged droplets. The first stage of analysis, the MS1 survey scan, captures a snapshot of all precursor ions present at that exact retention time. It is a crowded and noisy landscape. Yet a high-resolution instrument can distinguish ions differing in mass by mere millidaltons. This resolving power forms the backbone of confident identifications later on.

Precursor Ion Selection and the Duty Cycle Constraint

The quadrupole mass filter functions as a precise gatekeeper. You define an isolation window, typically 1.4 to 3.0 m/z units wide. This window selects a single precursor ion population and permits only those ions to pass through to the collision cell. The rest of the co-eluting peptides are discarded, lost forever to the vacuum pumps.

This is the duty cycle bottleneck. Set the window too narrow, say 0.7 m/z, and you achieve exquisite specificity but risk excluding important isotopic peaks. Those peaks carry valuable signal. Set it too wide, say 4.0 m/z, and you co-isolate background interferences. This creates chimeric spectra—a serious problem for downstream algorithms that expect a pure, single peptide fingerprint.

In practice, for comprehensive protein identification services, this balance is critical. The goal is to maximize the number of MS/MS spectra acquired per second while maintaining the fidelity required for accurate matching.

Collision Energetics and Fragmentation Fidelity

Once selected, the precursor ion is accelerated into a collision cell filled with an inert gas, usually nitrogen or helium. The kinetic energy converts to internal vibrational energy. This causes the peptide backbone to break at the amide bonds.

The physics of this breakage is not random. Under low-energy collision-induced dissociation, or CID, fragmentation occurs predominantly at the amide bond. This generates two main ion series. The b-ions are N-terminal fragments. The y-ions are C-terminal fragments. Together they form a ladder of mass differences that reveals the amino acid sequence.
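The ladder idea can be made concrete with a minimal sketch. It assumes an unmodified peptide, singly charged fragments, and standard monoisotopic residue masses; the function name `fragment_ladder` and the example peptide are illustrative only.

```python
# Monoisotopic residue masses in Da for the 20 standard amino acids.
RESIDUE_MASS = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 103.00919, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
PROTON = 1.007276
WATER = 18.010565


def fragment_ladder(peptide):
    """Return singly charged b- and y-ion m/z lists for an unmodified peptide."""
    masses = [RESIDUE_MASS[aa] for aa in peptide]
    b_ions, y_ions = [], []
    running = 0.0
    # b-ions grow from the N-terminus: b1, b2, ... b(n-1)
    for mass in masses[:-1]:
        running += mass
        b_ions.append(running + PROTON)
    running = 0.0
    # y-ions grow from the C-terminus and retain one water: y1, y2, ... y(n-1)
    for mass in reversed(masses[1:]):
        running += mass
        y_ions.append(running + WATER + PROTON)
    return b_ions, y_ions


b_ions, y_ions = fragment_ladder("PEPTIDE")
# The gap between neighbouring b-ions is the mass of one residue.
second_residue_mass = b_ions[1] - b_ions[0]
```

The difference between consecutive ions in either series equals one residue mass, which is what lets a search engine (or a person) read the sequence off the spectrum.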

However, the completeness of this ion series depends heavily on collision energy.

If the energy is too low, the peptide wobbles but does not break. You observe a prominent precursor peak and no sequence information. If the energy is too high, the peptide shatters into internal fragments and immonium ions. This erases the critical sequence ladder.

Modern instruments address this via Stepped Collision Energy, or sCE. Rather than applying a single voltage, the instrument rapidly cycles through low, medium, and high energies. It then merges the resulting fragments into a single spectrum. This approach ensures that both large, stable peptides and small, labile peptides yield rich, interpretable spectra. Large peptides require a harder hit to break. Small peptides require a gentle nudge. The outcome is higher coverage of the b- and y-ion series. This directly translates into higher search engine scores and more proteins identified per hour of instrument time.

This physical preparation step is the foundation of any downstream proteomics workflow. Without clean, high-fidelity MS/MS spectra, even the most sophisticated algorithms will fail to produce reliable identifications. Whether you are performing shotgun protein identification on a whole-cell lysate or targeting a purified complex, the physics inside the instrument sets the ceiling for what can be discovered.

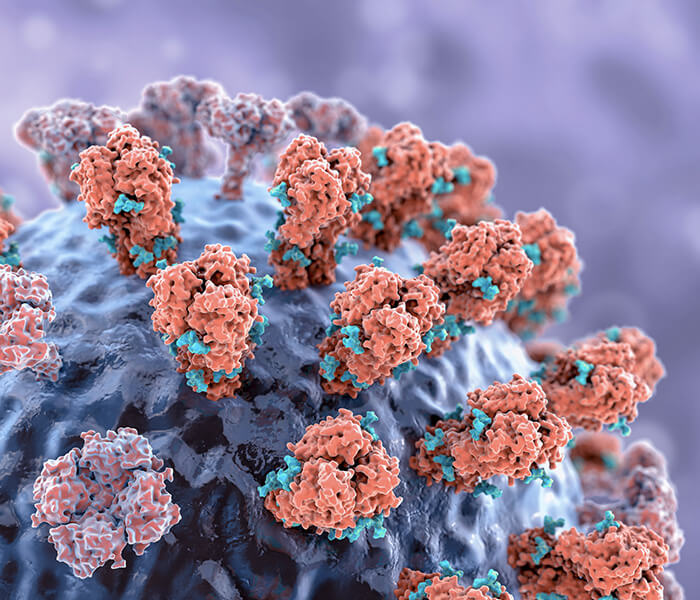

Figure 1: The MS/MS duty cycle and stepped collision energy (sCE) fragmentation. A quadrupole isolation window (typically 1.4–3.0 m/z) selects a single precursor ion population, discarding co-eluting peptides. The precursor is accelerated into a collision cell where sCE cycles through low, medium, and high collision energies, merging the fragments into a composite spectrum. This approach maximizes b- and y-ion coverage for both labile and stable peptides, directly enhancing downstream search engine scores.

The Algorithmic Logic of Bottom-Up Search Engines

After the instrument finishes its run, we are left with tens of thousands of fragmentation spectra. The challenge now shifts from physics to informatics. Which peptide sequence generated each spectrum? This is the domain of the database search engine. These tools perform an in silico recreation of the entire experiment.

The most widely used engines, including Mascot, SEQUEST, and Andromeda (the engine inside the MaxQuant suite), share a common three-step logic. First, they digest a protein database in silico to generate a list of candidate peptides. Second, they match each experimental spectrum against candidates whose mass falls within a user-defined tolerance. Third, they compute a score that reflects the quality of the match. A statistical framework then separates true identifications from false ones.

In Silico Digestion and the Missed Cleavage Problem

The first step of the search engine is to take your provided FASTA protein database and cut it up digitally. This mimics how trypsin would cut proteins in the wet lab. The rule seems simple. Cleave at the C-terminal side of Arginine (R) and Lysine (K), except when followed by Proline (P).

If this rule were perfect, predicting peptide masses would be trivial. But biology introduces missed cleavages. Trypsin is an enzyme, not a nanobot. Its efficiency is modulated by the local sequence context.

Consider an acidic residue like Aspartic Acid (D) or Glutamic Acid (E) sitting adjacent to a Lysine. The local negative charge can partially repel the enzyme's active site. This leads to a higher probability of a missed cleavage at that position. The peptide extends past the expected cut site.

Simple search engines handle this with a blunt parameter. You check a box labeled "Maximum Missed Cleavages = 2." This brute-force approach expands the search space considerably. The engine must consider peptides with zero, one, or two missed cuts. This increases computational burden and the potential for false positives.
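The rule-based digestion with a missed-cleavage limit can be sketched as follows. This is a simplified illustration, not the code of any particular search engine; the function name `tryptic_digest` and the toy sequence are assumptions made for the example.

```python
import re


def tryptic_digest(protein, max_missed=2):
    """Return (peptide, missed_cleavages) pairs for a simple trypsin rule.

    Rule: cleave after K or R unless the next residue is P.
    """
    # Positions immediately after each cleavable K/R.
    sites = [m.end() for m in re.finditer(r"[KR](?!P)", protein)]
    # Peptide boundaries: sequence start, internal cleavage sites, sequence end.
    bounds = [0] + [s for s in sites if s < len(protein)] + [len(protein)]
    peptides = []
    for i in range(len(bounds) - 1):
        for missed in range(max_missed + 1):
            j = i + 1 + missed
            if j >= len(bounds):
                break
            peptides.append((protein[bounds[i]:bounds[j]], missed))
    return peptides


# Toy sequence: the R before P is not a cleavage site.
strict = tryptic_digest("AKDERPGKLR", max_missed=0)
relaxed = tryptic_digest("AKDERPGKLR", max_missed=2)
```

With this toy sequence, allowing two missed cleavages doubles the candidate list from three peptides to six, which gives a feel for how quickly the search space grows on a full proteome.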

More sophisticated algorithms are moving toward Hidden Markov Models, or HMMs, for this task. An HMM does not just count missed cleavages. It models the transition probability of cleavage occurring or not occurring based on the flanking residues.

For example, take the sequence pattern Lysine-Aspartic Acid (K-D). A standard trypsin rule expects cleavage after K. An HMM trained on observed digests can instead learn a high probability of missed cleavage at this site due to acidic hindrance. The algorithm then assigns a higher a priori probability to the peptide extending past the K, making it a more likely candidate than the truncated version.

This nuance is essential when searching large datasets. It is particularly important for experiments using alternative enzymes. Lys-C, for instance, is even more sensitive to acidic residues than trypsin. Chymotrypsin has its own sequence biases.

By using advanced bioinformatics for proteomics that incorporates these probabilistic models, we can increase the sensitivity of identification. We do this without sacrificing statistical stringency. The search space better reflects the actual peptide pool present in the mass spectrometer.

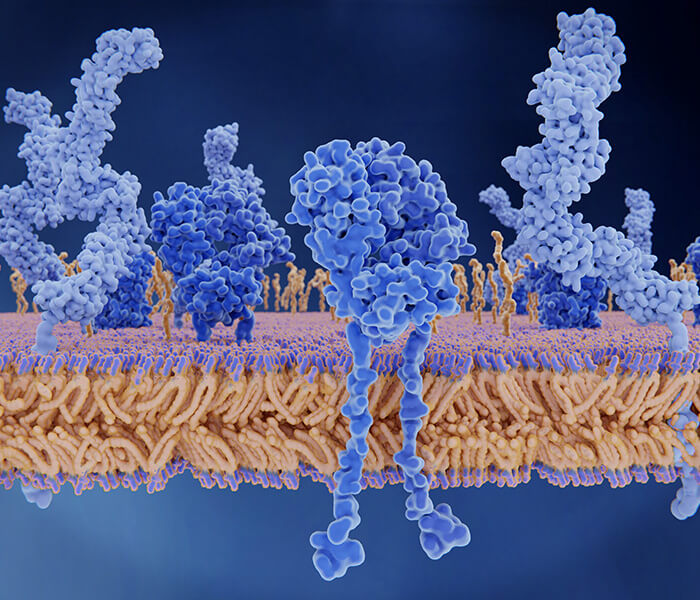

Figure 2: Hidden Markov Model (HMM) prediction of tryptic missed cleavage probability. Transition probabilities between cleavage (C) and non-cleavage (N) states are conditioned on flanking amino acid residues. Acidic residues (D/E) at positions –1 or +1 relative to a cleavage site (K/R) increase the probability of a missed cleavage event. HMM-based in silico digestion generates a candidate peptide pool that more accurately reflects the physical sample composition than rigid rule-based approaches.

Theoretical Spectrum Generation and Intensity Prediction

Once the in silico digest is complete, the search engine has a list of candidate peptide masses. For every MS/MS spectrum in the data file, the engine finds candidate peptides whose mass falls within the user-defined mass tolerance. For each candidate, the engine generates a theoretical spectrum.

Historically, this theoretical spectrum was a simple stick figure. The algorithm assumed that every possible b-ion and y-ion would be present. It further assumed that all ions would have equal intensity.

This is a zero-order approximation. It is a major source of scoring inaccuracy.

In reality, ion intensity in a mass spectrometer depends on several factors. Gas-phase basicity plays a role. The presence of Proline, which fragments uniquely at its N-terminal side, creates dominant peaks. Internal basic residues like Histidine also influence the fragmentation pattern. A theoretical spectrum that assigns equal weight to all peaks will inevitably penalize a true match. That match might happen to have a weak y5 ion but a strong y8 ion. The simple model sees the weak y5 as a mismatch.

This is where deep learning models like Prosit have transformed the field. These neural networks are trained on millions of high-quality synthetic peptide spectra. They predict not only which ions will appear, but their exact relative intensities.

When a search engine uses an intensity predictor, the match is judged against a realistic expectation. It is no longer compared to an idealistic stick figure. The result is a dramatic compression of the false-positive tail in the score distribution. True matches score higher. False matches score lower. The separation between the two populations becomes clearer.
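To illustrate why the reference intensities matter, here is a small sketch that scores one observed fragment pattern against two expectations: a uniform stick spectrum and an intensity prediction. The similarity measure is the normalized spectral angle often used to compare predicted and measured spectra. All intensity values below are invented for illustration and are not output from Prosit or any other model.

```python
import math


def spectral_angle(observed, predicted):
    """Normalized spectral angle: 1.0 for identical shapes, 0.0 for orthogonal."""
    dot = sum(o * p for o, p in zip(observed, predicted))
    norm_o = math.sqrt(sum(o * o for o in observed))
    norm_p = math.sqrt(sum(p * p for p in predicted))
    if norm_o == 0 or norm_p == 0:
        return 0.0
    # Clamp to guard against tiny floating-point overshoot before acos.
    cosine = max(-1.0, min(1.0, dot / (norm_o * norm_p)))
    return 1.0 - 2.0 * math.acos(cosine) / math.pi


# Hypothetical relative intensities for five matched y-ions.
observed = [0.05, 0.20, 0.90, 1.00, 0.10]
stick_model = [1.0, 1.0, 1.0, 1.0, 1.0]        # every ion equally intense
predicted = [0.10, 0.25, 0.80, 1.00, 0.15]     # assumed model output

score_stick = spectral_angle(observed, stick_model)
score_predicted = spectral_angle(observed, predicted)
```

In this toy case the same observed peaks score noticeably higher against the intensity-aware expectation than against the stick model, which is the effect described above.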

For researchers engaged in protein identification from complex samples, this improvement is tangible. Deep learning-enhanced scoring can rescue low-abundance peptide identifications that would otherwise fall below the statistical threshold. It extracts more biological insight from the same raw data.

The Impact of Mass Tolerance on the Search Space

Perhaps the single most impactful parameter in a database search is the Precursor Mass Tolerance. This value defines the window within which a candidate peptide's calculated mass must fall relative to the observed MS1 mass.

Let us explore this with a concrete, variable-driven comparison. It demonstrates how high-resolution instrumentation translates directly into algorithmic confidence.

Scenario A: Legacy Low-Resolution Data

Imagine data acquired on an older ion trap instrument. The mass accuracy is around 50 parts per million, or 50 ppm.

- Observed Precursor m/z: 785.42

- Mass Tolerance Window: plus or minus 50 ppm equals 785.42 plus or minus 0.039 m/z. This is a window 0.078 m/z wide.

- Candidate Peptides in the Human Database Falling in this Window: Approximately 85 candidates.

The search engine must now score 85 different peptide sequences against the same spectrum. Among these 85 candidates, there will be random false matches. These are peptides with the right mass but the wrong sequence. By sheer chance, a few of their predicted fragment ions align with noise peaks in the spectrum.

The algorithm must work hard to separate the true signal from this statistical noise. The scoring function needs to be exceptionally discriminating. Even then, some false matches may score higher than a true but low-quality match.

Scenario B: High-Resolution Data

Now consider data from a modern Orbitrap Astral or timsTOF instrument. The mass accuracy is better than 5 ppm. In practice, many labs tighten the tolerance to 2.5 ppm once systematic calibration drift has been corrected.

- Observed Precursor m/z: 785.4211

- Mass Tolerance Window: plus or minus 2.5 ppm equals 785.4211 plus or minus 0.0020 m/z. This is a window only about 0.0039 m/z wide.

- Candidate Peptides in the Human Database Falling in this Window: Only 1 or 2 candidates.

The search space has collapsed. The engine no longer needs to choose among 85 possibilities. It must only decide between one or two candidates. The probability of a random false match having the correct mass by chance is now vanishingly small.
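The window arithmetic in both scenarios can be reproduced with a few lines. The sketch below also counts how many entries of a sorted candidate list fall inside the window; the candidate m/z values are made up for the example and do not come from a real human database.

```python
from bisect import bisect_left, bisect_right


def ppm_window(mz, tol_ppm):
    """Return the (low, high) m/z bounds for a symmetric ppm tolerance."""
    half_width = mz * tol_ppm * 1e-6
    return mz - half_width, mz + half_width


def count_candidates(sorted_mz, observed_mz, tol_ppm):
    """Count candidates inside the tolerance window of a sorted m/z list."""
    low, high = ppm_window(observed_mz, tol_ppm)
    return bisect_right(sorted_mz, high) - bisect_left(sorted_mz, low)


# Hypothetical, sorted candidate m/z values near the observed precursor.
candidates = [785.39, 785.41, 785.4205, 785.4212, 785.43, 785.45]

wide = count_candidates(candidates, 785.4211, 50.0)    # legacy tolerance
narrow = count_candidates(candidates, 785.4211, 2.5)   # high-resolution tolerance
```

Because the tolerance is relative, the absolute window scales with the precursor m/z: the same ppm setting is wider in absolute terms for heavier precursors.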

Figure 3: Geometric reduction of candidate search space with increasing mass accuracy. At 50 ppm (left), an m/z 785.42 precursor admits ~85 candidate peptides from the human proteome. At 2.5 ppm (right), the same precursor admits only 1–2 candidates. This compression expels random false matches from consideration before scoring begins, reducing the decoy match rate and enabling robust FDR control at the 1% level with deeper proteome coverage.

The Mathematical Consequence for FDR

The number of candidate peptides per spectrum is roughly proportional to the width of the precursor mass window, because peptide masses are spread fairly densely across the relevant mass range. When you reduce the tolerance from 50 ppm to 5 ppm, you reduce the number of candidate peptides by roughly a factor of ten. Every candidate removed is one fewer opportunity for a random match.

This has a profound effect on the False Discovery Rate, or FDR. FDR is calculated using a simple formula.

FDR equals the Number of Decoy Hits divided by the Number of Target Hits.

Because high-resolution data drastically reduces the pool of random decoy matches, the denominator—Target Hits—remains high. The numerator—Decoy Hits—plummets toward zero.

Therefore, achieving the industry-standard 1 percent FDR threshold becomes significantly easier with high-resolution data. You can identify more proteins while maintaining superior statistical rigor.

For laboratories utilizing shotgun protein identification on the latest generation of mass spectrometers, this translates to deeper proteome coverage from minimal sample input. Whether you are analyzing a complex tissue lysate or seeking low-abundance biomarkers in biofluids, the physics of mass accuracy provides the foundation for reliable discovery.

This variable-driven analysis highlights a key principle. Every parameter you set in a database search has a direct, quantifiable impact on the final list of identified proteins. Mass tolerance is not just a number to copy from a protocol. It is a lever that controls the volume of the search space and, by extension, the stringency of your FDR control.

Probabilistic Scoring and the Peptide Spectral Match Matrix

Once the search engine has a list of candidate peptides and their theoretical spectra, it must assign a score to each match. This score quantifies how well the experimental spectrum aligns with the theoretical prediction. The scoring function is the heart of any search engine. Its design determines which peptides are reported and which are discarded.

Two fundamentally different scoring philosophies dominate the field. The probability-based approach, exemplified by Mascot, treats the match as a statistical hypothesis test. The cross-correlation approach, pioneered by SEQUEST, treats the match as a signal processing problem. Understanding both is essential for interpreting search results critically.

The Mascot Probability-Based Score

Mascot, developed by Matrix Science, takes an elegantly statistical approach. It asks a simple question. Given a database of a certain size, what is the probability that a match of this quality would occur by random chance?

The Mascot Ion Score is calculated using a formula rooted in probability theory.

Score equals negative 10 times the base-10 logarithm of P.

Here, P represents the absolute probability that the observed match is a random event. This probability depends on two factors. First, the size of the database being searched. Larger databases have more opportunities for random matches. Second, the number of fragment ions that align between the experimental and theoretical spectra.

A score of 20 means that P equals 0.01. There is a 1 in 100 chance that this match is random. A score of 40 means that P equals 0.0001. There is a 1 in 10,000 chance of randomness. The logarithmic scale compresses a vast range of probabilities into a manageable numerical range.

This framework is intuitive. Higher scores mean higher confidence. But there is a critical nuance. The Mascot score alone does not control the False Discovery Rate. It only reports the probability of a single match being random. When you run thousands of spectra, the accumulation of these probabilities requires a separate statistical correction. That correction is the FDR calculation we will discuss shortly.

For researchers performing protein identification services on large cohorts, understanding this distinction is vital. A Mascot score of 30 might be excellent for a small bacterial database but mediocre for a search against the entire human proteome. The significance of the score depends on the search space size. This connects directly back to our earlier discussion of mass tolerance. A tighter mass tolerance reduces the effective search space, making a given score more meaningful.
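The logarithmic score and its dependence on search space can be sketched in a few lines. The conversion functions follow the formula given above. The `significance_threshold` function is an assumption for illustration: it applies a simple Bonferroni-style correction for the number of candidates and should not be read as Mascot's exact procedure.

```python
import math


def ion_score(p):
    """Convert a random-match probability into a -10*log10(P) score."""
    return -10.0 * math.log10(p)


def probability(score):
    """Invert the score back into a random-match probability."""
    return 10.0 ** (-score / 10.0)


def significance_threshold(n_candidates, alpha=0.05):
    """Score needed so that alpha holds after testing n_candidates peptides.

    Illustrative Bonferroni-style correction, assumed for this sketch.
    """
    return -10.0 * math.log10(alpha / n_candidates)


small_search = significance_threshold(2)      # tight tolerance, few candidates
large_search = significance_threshold(85)     # wide tolerance, many candidates
```

Under this assumption, the score required for significance rises by 10 for every tenfold increase in candidates, which is why the same score can be convincing in a small search and marginal in a large one.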

The SEQUEST Cross-Correlation (XCorr) Logic

SEQUEST, the original database search engine developed by Jimmy Eng and John Yates, takes a different approach. It treats the experimental spectrum and the theoretical spectrum as two signals. The goal is to find the optimal overlap between them.

The core metric is called XCorr, short for cross-correlation. Here is how it works in plain language.

First, SEQUEST takes the experimental spectrum and performs a Fast Fourier Transform, or FFT. This converts the spectrum from the m/z domain into the frequency domain. It also does this for the theoretical spectrum.

Second, it multiplies one frequency-domain representation by the complex conjugate of the other. In signal processing, this product is equivalent to cross-correlation in the original domain, which here is m/z. The operation effectively slides the theoretical spectrum along the experimental spectrum, calculating the overlap at every possible offset.

Third, it converts the result back into the m/z domain. The XCorr score is the correlation at zero offset minus the mean correlation over the surrounding offsets, plus or minus 75 mass bins in the original implementation.

Why is this sliding necessary? The overlap at deliberately misaligned offsets estimates how much of the agreement between the two spectra arises by chance. A true match shows a sharp spike at zero offset that stands well above this background. A random match shows roughly the same overlap wherever the theoretical spectrum is placed.

But there is a catch. The raw overlap is sensitive to noise. Random noise peaks in a dense experimental spectrum can accidentally align with theoretical peaks, artificially inflating the score. Subtracting the local background correlation is what suppresses these noise-induced false matches. Because the full calculation is expensive, SEQUEST also computes a faster preliminary score called Sp, based on the number and summed intensity of matched fragment ions, to shortlist candidates before XCorr is calculated.
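A minimal sketch of the cross-correlation score follows. It uses the commonly described formulation: the overlap at zero offset minus the mean overlap across offsets of plus or minus 75 bins. For readability it sums the overlaps directly instead of using an FFT; both routes give the same numbers. The bin width, vector length, and helper names are assumptions for this example, and real implementations add intensity preprocessing that is omitted here.

```python
def bin_spectrum(peaks, bin_width=1.0005, n_bins=2000):
    """Turn (m/z, intensity) pairs into a fixed-length intensity vector."""
    vector = [0.0] * n_bins
    for mz, intensity in peaks:
        index = int(mz / bin_width)
        if 0 <= index < n_bins:
            vector[index] = max(vector[index], intensity)
    return vector


def correlation_at(experimental, theoretical, offset):
    """Overlap of the two vectors with the theoretical one shifted by offset bins."""
    n = len(experimental)
    total = 0.0
    for i, value in enumerate(theoretical):
        j = i + offset
        if value and 0 <= j < n:
            total += experimental[j] * value
    return total


def xcorr(experimental, theoretical, max_offset=75):
    """Zero-offset correlation minus the mean correlation at nearby offsets."""
    zero = correlation_at(experimental, theoretical, 0)
    background = sum(
        correlation_at(experimental, theoretical, k)
        for k in range(-max_offset, max_offset + 1)
        if k != 0
    ) / (2 * max_offset)
    return zero - background


# Hypothetical three-peak spectrum scored against itself and a shifted copy.
spectrum = bin_spectrum([(200.5, 1.0), (300.5, 1.0), (400.5, 1.0)])
shifted = [0.0] * 10 + spectrum[:-10]
aligned_score = xcorr(spectrum, spectrum)
misaligned_score = xcorr(spectrum, shifted)
```

A perfectly aligned match keeps its full zero-offset overlap, while the misaligned copy contributes only to the background term and ends up with a slightly negative score.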

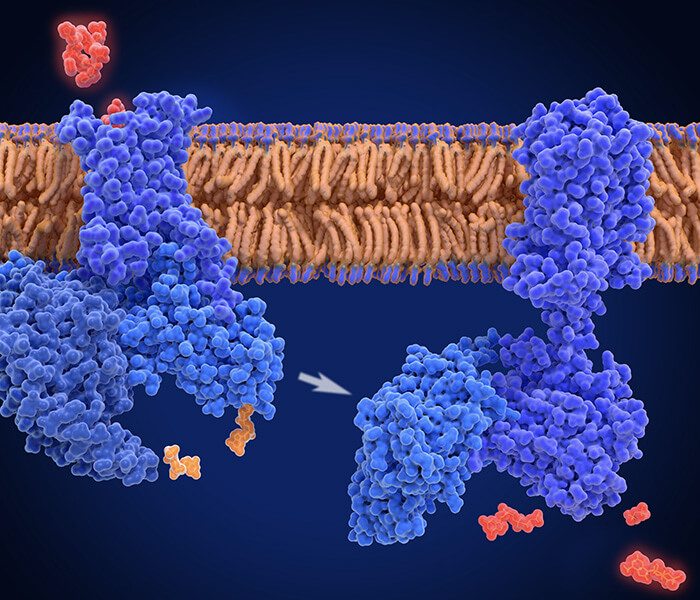

Figure 4: The SEQUEST cross-correlation (XCorr) scoring mechanism. The experimental spectrum (orange) and theoretical spectrum (blue) are transformed to the frequency domain via FFT. Multiplying one representation by the complex conjugate of the other and performing an inverse FFT yields the correlation at every offset. The XCorr score is the zero-offset correlation minus the mean correlation over neighboring offsets, a background correction that suppresses noise-induced false matches.

Comparing Scoring Philosophies in Practice

Neither approach is universally superior. Mascot's probability-based score is elegant and statistically grounded, but it assumes a null hypothesis that may not perfectly reflect the complexity of real spectra. SEQUEST's XCorr is robust to small mass errors but requires empirical normalization and is computationally intensive.

Modern search engines often blend these philosophies. Andromeda, the engine integrated into MaxQuant, uses a probability-based scoring function similar to Mascot but optimized for speed. It also incorporates features that were once unique to post-processing tools, like the ability to score co-fragmented peptides.

For a deeper dive into how these algorithms handle specific post-translational modifications, researchers often turn to specialized workflows. For instance, phosphoproteomics analyses require search engines to consider the neutral loss of phosphoric acid from serine and threonine residues. This adds a layer of complexity to both the theoretical spectrum generation and the scoring function. Similarly, N-linked glycosylation analysis requires specialized search parameters to account for the large, heterogeneous glycan masses attached to asparagine residues.

Statistical Rigor and the False Discovery Rate

The search engine produces a list of peptide-spectral matches, or PSMs, each with an associated score. But this list contains both true and false identifications. The final and most critical step is to apply a statistical filter that controls the False Discovery Rate.

The gold standard in proteomics is to report protein and peptide identifications at a 1 percent FDR. This means that, on average, 1 out of every 100 identifications on your list is expected to be a false positive. This is not a guarantee of absolute truth. It is a statistical estimate of the error rate.

The Target-Decoy Search Strategy

How do we estimate the number of false positives without knowing the ground truth? The answer is elegant. We search the data against a decoy database.

A decoy database is a shuffled or reversed version of the target protein database. It contains sequences that are the same length and amino acid composition as real proteins, but they are not expected to exist in nature. Therefore, any match to the decoy database is, by definition, a false positive.

The target and decoy databases are concatenated into a single file. The search engine scores spectra against both simultaneously. It does not know which sequences are real and which are decoys.

After the search is complete, we count two numbers. First, the number of PSMs that matched to the target database. Second, the number of PSMs that matched to the decoy database.

The FDR is then estimated using a simple formula.

FDR equals the Number of Decoy Matches divided by the Number of Target Matches.

If we have 1,000 target matches and 10 decoy matches, the estimated FDR is 1 percent.
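The calculation is simple enough to sketch directly. The function below assumes each PSM is represented as a `(score, is_decoy)` pair from a concatenated target-decoy search; that representation and the function name are choices made for this example.

```python
def estimate_fdr(psms, threshold):
    """Estimate FDR as decoy hits / target hits among PSMs at or above threshold.

    psms: list of (score, is_decoy) tuples from a concatenated search.
    """
    targets = sum(1 for score, is_decoy in psms if score >= threshold and not is_decoy)
    decoys = sum(1 for score, is_decoy in psms if score >= threshold and is_decoy)
    if targets == 0:
        return 0.0
    return decoys / targets


# Reproduce the worked example: 1,000 target and 10 decoy matches pass the cutoff.
example = [(50.0, False)] * 1000 + [(50.0, True)] * 10 + [(5.0, True)] * 200
fdr = estimate_fdr(example, threshold=40.0)
```

Sweeping `threshold` from high to low and stopping where the estimate first exceeds 0.01 is the usual way to find the score cutoff for a 1 percent FDR.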

This method relies on a key assumption. The rate of false matches to the target database is approximately equal to the rate of false matches to the decoy database. This assumption holds remarkably well in practice, especially when the decoy database is constructed carefully.

The q-Value and Percolator Post-Processing

The simple FDR formula above treats all PSMs above a certain score threshold equally. But not all matches are created equal. A match with a very high score is less likely to be false than a match that barely exceeds the threshold.

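The q-value is a more refined metric. It assigns to each individual PSM the minimum FDR at which that PSM would still be accepted. A PSM with a q-value of 0.01 is included in the results once the list is filtered at 1 percent FDR or looser. This is not the same as saying that this particular PSM has a 1 percent chance of being wrong; that per-match quantity is the posterior error probability, or PEP. The q-value is nevertheless a more granular and useful statistic than a single global score cutoff.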
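A q-value can be understood as the smallest FDR at which a given PSM would still make it onto the list. The sketch below computes it from ranked target and decoy scores; it is a simplified illustration that ignores tied scores and does not reproduce Percolator's internals.

```python
def q_values(psms):
    """Return (score, is_decoy, q_value) tuples sorted by descending score.

    psms: list of (score, is_decoy) tuples from a concatenated search.
    """
    ranked = sorted(psms, key=lambda psm: psm[0], reverse=True)
    fdrs = []
    targets = decoys = 0
    # FDR estimate if the cutoff were placed right after each PSM.
    for _, is_decoy in ranked:
        if is_decoy:
            decoys += 1
        else:
            targets += 1
        fdrs.append(decoys / max(targets, 1))
    # q-value: lowest FDR reachable at this score or any more permissive cutoff.
    q = []
    running_min = float("inf")
    for fdr in reversed(fdrs):
        running_min = min(running_min, fdr)
        q.append(running_min)
    q.reverse()
    return [(score, is_decoy, qv) for (score, is_decoy), qv in zip(ranked, q)]


# Hypothetical scores: one decoy ranked third out of five PSMs.
toy = [(10, False), (9, False), (8, True), (7, False), (6, False)]
result = q_values(toy)
```

The running minimum makes q-values monotonic in score, so filtering at a chosen q-value always yields a contiguous top portion of the ranked list.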

Calculating accurate q-values requires a sophisticated model of the score distributions. This is where Percolator enters the picture.

Percolator is a semi-supervised machine learning tool that has become a standard component of modern proteomics workflows. It uses a Support Vector Machine, or SVM, to re-score PSMs based on multiple features, not just the raw search engine score.

Percolator takes as input the initial search results, including target and decoy matches. The decoy matches serve as a labeled set of true negatives. Percolator then learns a decision boundary that best separates the target matches from the decoy matches. It considers features such as:

- The raw search engine score (Mascot Ion Score or XCorr).

- The difference between the observed and calculated precursor mass (Delta Mass).

- The charge state of the peptide.

- The number of missed cleavages.

- The length of the peptide.

By integrating these multiple dimensions, Percolator often identifies true positives that fell below the initial score threshold. It also downgrades false positives that had deceptively high scores. The result is a net increase in the number of identifications at a fixed 1 percent FDR.

For any rigorous protein identification experiment, applying Percolator or a similar post-processing tool is now considered best practice. It extracts maximum value from the raw search engine output.

Figure 5: Estimation of False Discovery Rate (FDR) using target-decoy competition. The score distributions of target PSMs (blue) and decoy PSMs (red) partially overlap. The FDR at any score threshold (gold vertical line) is calculated as the ratio of the area under the decoy curve (false positives) to the area under the target curve (reported identifications) to the right of the cutoff. The 1% FDR standard maintains this ratio at ≤0.01, ensuring that on average only 1 in 100 reported identifications is a false positive.

Comparative Architecture of Database Search Engines

The choice of search engine can influence which peptides are identified and with what confidence. While the underlying physics of MS/MS is universal, each engine implements the algorithmic logic slightly differently. The table below summarizes the key architectural differences among the most widely used platforms.

| Feature | Mascot | SEQUEST | Andromeda (MaxQuant) |
|---|---|---|---|
| Scoring Core | Probability-based MOWSE score. S equals negative 10 log P. | Cross-correlation (XCorr) with preliminary Sp score. | Probability-based with optimized scoring for high-resolution data. |
| Speed | Moderate. Optimized for server-side processing. | Slower. Historically required substantial compute resources. | Very fast. Designed for large-scale, label-free quantification workflows. |
| PTM Handling | Excellent. Supports a vast library of defined modifications. | Good. Requires manual configuration of dynamic modifications. | Excellent. Integrated with MaxQuant for PTM discovery and localization. |
| FDR Integration | Built-in target-decoy option. Often used with Percolator. | Requires external tools like Percolator for rigorous FDR control. | Fully integrated target-decoy FDR estimation at PSM, peptide, and protein levels. |
| Typical Use Case | Core facility searches, small to medium datasets, detailed PTM analysis. | Historically dominant. Still used where legacy compatibility is required. | High-throughput quantitative proteomics, large cohort studies, label-free workflows. |

This table highlights a broader trend in the field. The lines between "search engine," "quantification tool," and "statistical validator" are blurring. Modern platforms like MaxQuant integrate all three functions into a single, streamlined pipeline.

For researchers conducting TMT-based or label-free quantitative proteomics, this integration is a significant advantage. The search engine and quantification algorithm share the same underlying data structures. This ensures consistency in peptide identification across multiple samples, which is essential for accurate relative quantitation.

Synthesis and Practical Implications

The journey from an ionized peptide in a vacuum chamber to a statistically validated protein identification is long and complex. But it is governed by a clear, logical chain of cause and effect.

The physics of the instrument defines the quality of the raw spectral data. Stepped collision energy improves b- and y-ion coverage. High mass accuracy compresses the search space. These physical improvements directly translate into algorithmic advantages.

The search engine then interprets this data through a specific scoring lens. Probability-based models like Mascot provide a statistical framework for individual matches. Cross-correlation models like SEQUEST offer robustness to small mass errors.

Finally, statistical post-processing with Percolator and target-decoy FDR estimation provides a rigorous, community-standard measure of confidence. The 1 percent FDR threshold is not arbitrary. It represents a carefully calibrated balance between sensitivity and specificity.

Understanding this logic empowers you to make informed decisions. You can set mass tolerances appropriately for your instrument. You can interpret Mascot scores in the context of your database size. You can critically evaluate a list of identified proteins, knowing that 1 percent of them may be false discoveries.

For those seeking to apply these principles without navigating the complexity of each software package, protein identification services offer a streamlined path. By leveraging optimized workflows and the latest algorithmic tools, these services deliver confident identifications from a wide range of sample types. From simple gel band identifications to comprehensive shotgun protein identification of whole proteomes, the underlying logic remains the same.

The field continues to evolve. Deep learning models like Prosit are already rewriting the rules of theoretical spectrum generation. Transformer-based models are beginning to tackle de novo sequencing with unprecedented accuracy. The fundamental goal, however, remains unchanged: to extract reliable, reproducible biological insight from the complex and noisy world of tandem mass spectrometry data.

Frequently Asked Questions

1. What is the difference between a peptide-spectral match (PSM) FDR and a protein FDR?

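The PSM FDR estimates the error rate at the level of individual spectra matched to peptides. The protein FDR estimates the error rate at the level of inferred protein groups. The two are not interchangeable. Correct PSMs cluster on a comparatively small set of proteins that are truly present, while incorrect PSMs scatter roughly at random across the database. As a result, a dataset filtered only at 1 percent PSM FDR generally carries a protein FDR well above 1 percent. Protein-level FDR therefore has to be estimated and controlled separately, after grouping and parsimony rules have been applied.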
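A back-of-the-envelope calculation shows what can happen when a dataset is filtered only at the PSM level, with no separate protein-level control. The sketch below uses hypothetical numbers and a deliberately pessimistic assumption: every false PSM lands on a different protein that is not otherwise identified. The function name and parameters are illustrative only.

```python
def naive_protein_fdr(n_psms, psm_fdr, n_true_proteins,
                      false_psms_per_false_protein=1.0):
    """Rough protein-level error rate implied by a PSM-level filter.

    Assumes false PSMs fall on proteins outside the set of true proteins,
    spread at `false_psms_per_false_protein` per wrong protein
    (1.0 = each false PSM creates its own false protein, the worst case).
    """
    false_psms = n_psms * psm_fdr
    false_proteins = false_psms / false_psms_per_false_protein
    return false_proteins / (n_true_proteins + false_proteins)


if __name__ == "__main__":
    # Hypothetical experiment: 100,000 accepted PSMs at 1 percent PSM FDR,
    # with the correct PSMs concentrated on 5,000 real proteins.
    rate = naive_protein_fdr(100_000, 0.01, 5_000)
    print(f"{rate:.1%}")  # 16.7%
```

Real data is less extreme, because some false PSMs hit proteins that are also supported by correct matches. The direction of the effect is the point: about 1,000 false PSMs spread across the database weigh far more against 5,000 proteins than against 100,000 PSMs.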

2. Why is 1 percent FDR considered the standard?

A 1 percent FDR represents a practical balance. Lowering the threshold to 0.1 percent increases stringency but discards many true, low-abundance identifications. Raising it to 5 percent includes more true positives but also increases the risk of reporting false leads. The 1 percent level has emerged as a consensus across the proteomics community for routine discovery experiments.

3. Can I use a decoy database from a different species?

No. The decoy database must be derived directly from the target database used for the search. Using a decoy from a different species would violate the assumption that the rate of false matches is equivalent between target and decoy.
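Deriving decoys from the target database can be as simple as reversing each sequence. The following is a minimal sketch under that assumption. The `DECOY_` prefix and the dictionary-based input are illustrative choices, and some tools use variants such as shuffling or reversal that keeps cleavage-site residues in place.

```python
def make_reversed_decoys(targets, decoy_prefix="DECOY_"):
    """Build a concatenated target-decoy database by sequence reversal.

    targets: dict mapping accession -> protein sequence.
    Returns a new dict holding every target plus one reversed decoy each,
    so decoys share the size and amino acid composition of the targets.
    """
    decoys = {decoy_prefix + acc: seq[::-1] for acc, seq in targets.items()}
    return {**targets, **decoys}


if __name__ == "__main__":
    db = make_reversed_decoys({"P1": "MKTAYR", "P2": "GASPVK"})
    for accession, sequence in db.items():
        print(accession, sequence)
```

Because each decoy is built from its own target, the two halves of the database match in size, length distribution, and composition, which is what the equal-false-match-rate assumption relies on.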

4. What happens if I set my mass tolerance too wide?

A wide mass tolerance, such as 50 ppm on a high-resolution instrument, dramatically expands the search space. This increases the number of random candidate peptides, which inflates the number of decoy matches. As a result, achieving a 1 percent FDR becomes more difficult, and you may identify fewer proteins at the same statistical threshold.
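The effect of tolerance on the candidate list is easy to see numerically. The sketch below converts a ppm tolerance into an absolute mass window and counts how many peptide masses from a sorted list fall inside it. The mass list is made up for illustration, and the function names are hypothetical.

```python
import bisect


def ppm_window(mass, tol_ppm):
    """Return the (low, high) mass window for a tolerance given in ppm."""
    delta = mass * tol_ppm / 1e6
    return mass - delta, mass + delta


def count_candidates(sorted_masses, precursor_mass, tol_ppm):
    """Count peptide masses within tol_ppm of the precursor mass.

    sorted_masses must be sorted ascending; binary search keeps this fast
    even for databases with millions of peptides.
    """
    low, high = ppm_window(precursor_mass, tol_ppm)
    left = bisect.bisect_left(sorted_masses, low)
    right = bisect.bisect_right(sorted_masses, high)
    return right - left


if __name__ == "__main__":
    # Hypothetical peptide masses (Da) near a 1500 Da precursor.
    masses = [1499.90, 1499.99, 1500.00, 1500.01, 1500.05, 1500.10]
    for tol in (10, 50):
        print(tol, "ppm ->", count_candidates(masses, 1500.0, tol), "candidates")
```

At 1500 Da, 10 ppm corresponds to a window of plus or minus 0.015 Da, while 50 ppm widens it to plus or minus 0.075 Da. In a real database the number of candidates grows roughly in proportion to the window width.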

5. Do I always need to use Percolator?

For most discovery proteomics experiments, using Percolator or an equivalent semi-supervised learning tool is strongly recommended. It typically increases the number of identifications at a fixed FDR. Exceptions include targeted proteomics assays like SRM or PRM, where the identity of the peptide is already established.

6. How do search engines handle post-translational modifications?

Search engines let users specify variable modifications, such as phosphorylation of serine or oxidation of methionine. Each variable modification expands the search space, because every modifiable residue must be considered in both its modified and unmodified state. The number of candidate forms therefore grows combinatorially with the number of modifiable sites in a peptide. Too many variable modifications can severely degrade statistical power, so careful selection is essential.
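To make the growth concrete, the sketch below enumerates the modified forms of a single peptide. It is an illustration only: it assumes each listed residue has exactly one possible modification, and the `max_mods` cap is a hypothetical parameter, although many engines expose a similar limit on modifications per peptide.

```python
from itertools import combinations


def modified_forms(peptide, variable_mods, max_mods=3):
    """List every modification state of a peptide.

    variable_mods: dict mapping residue letter -> modification label,
                   e.g. {"S": "ph", "M": "ox"}.
    Each form is a tuple of (position, label) pairs; the empty tuple is
    the unmodified peptide. At most max_mods sites are modified at once.
    """
    sites = [(i, variable_mods[aa])
             for i, aa in enumerate(peptide) if aa in variable_mods]
    forms = []
    for k in range(min(max_mods, len(sites)) + 1):
        forms.extend(combinations(sites, k))
    return forms


if __name__ == "__main__":
    mods = {"S": "ph", "T": "ph", "Y": "ph", "M": "ox"}
    # Five modifiable sites: M, S, T, S, Y.
    print(len(modified_forms("MSTSYK", mods, max_mods=5)))  # 32
    print(len(modified_forms("MSTSYK", mods, max_mods=3)))  # 26
```

A peptide with five modifiable sites already yields 32 candidate forms instead of one. Capping the number of simultaneous modifications trims this only modestly, which is why limiting the list of variable modifications matters more than tuning the cap.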

7. What is the role of retention time prediction in modern search engines?

Predicted retention time, often derived from deep learning models, serves as an orthogonal filter. Peptides that match the spectrum but elute at an unexpected time can be penalized. This additional dimension improves the separation between correct and incorrect matches, which in turn yields more identifications at the same FDR threshold.

8. How do search engines handle chimeric spectra from co-fragmented peptides?

Modern engines like Andromeda and MSFragger can identify and score co-fragmented peptides simultaneously. When multiple precursors are co-isolated and fragmented together, the resulting chimeric spectrum contains fragment ions from two or more distinct peptides. Advanced algorithms deconvolve this mixed signal by assigning fragment ions to their respective precursors, reducing the number of unassigned spectra and improving proteome coverage in complex samples.

References

- Eng, J. K., McCormack, A. L., & Yates, J. R. (1994). An approach to correlate tandem mass spectral data of peptides with amino acid sequences in a protein database. Journal of the American Society for Mass Spectrometry, 5(11), 976-989. https://doi.org/10.1016/1044-0305(94)80016-2

- Perkins, D. N., Pappin, D. J., Creasy, D. M., & Cottrell, J. S. (1999). Probability-based protein identification by searching sequence databases using mass spectrometry data. Electrophoresis, 20(18), 3551-3567. https://doi.org/10.1002/(SICI)1522-2683(19991201)20:18<3551::aid-elps3551>3.0.CO;2-2

- Cox, J., & Mann, M. (2008). MaxQuant enables high peptide identification rates, individualized p.p.b.-range mass accuracies and proteome-wide protein quantification. Nature Biotechnology, 26(12), 1367-1372. https://doi.org/10.1038/nbt.1511

- Elias, J. E., & Gygi, S. P. (2007). Target-decoy search strategy for increased confidence in large-scale protein identifications by mass spectrometry. Nature Methods, 4(3), 207-214. https://doi.org/10.1038/nmeth1019

- Käll, L., Canterbury, J. D., Weston, J., Noble, W. S., & MacCoss, M. J. (2007). Semi-supervised learning for peptide identification from shotgun proteomics datasets. Nature Methods, 4(11), 923-925. https://doi.org/10.1038/nmeth1113

- Gessulat, S., Schmidt, T., Zolg, D. P., Samaras, P., Schnatbaum, K., Zerweck, J., ... & Wilhelm, M. (2019). Prosit: proteome-wide prediction of peptide tandem mass spectra by deep learning. Nature Methods, 16(6), 509-518. https://doi.org/10.1038/s41592-019-0426-7

- Nesvizhskii, A. I. (2010). A survey of computational methods and error rate estimation procedures for peptide and protein identification in shotgun proteomics. Journal of Proteomics, 73(11), 2092-2123. https://doi.org/10.1016/j.jprot.2010.08.009